Part 9 of 10 in Fully Functional Factory

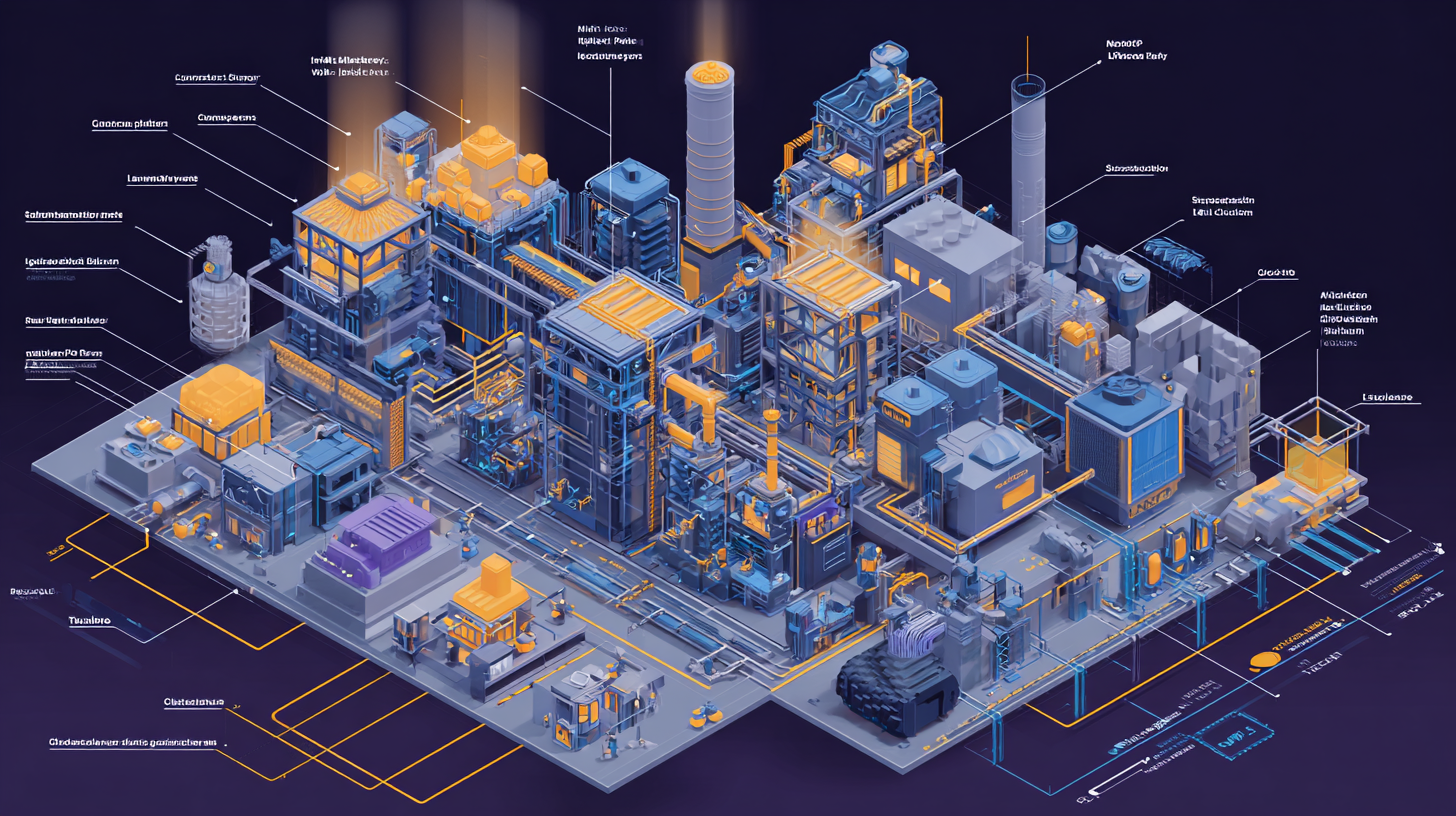

Architecture as Code: The Living Architecture

You've defined your system in three meta-languages:

- APIs (Smithy, OpenAPI, etc.) — What operations exist, what they accept, what they return

- Data models (Capacitor, Prisma, etc.) — What entities exist, what fields they have, how they relate

- UI components (Flux, Storybook, etc.) — What interfaces exist, what interactions they support

But there's a fourth definition that's been missing.

The one that describes how all these pieces fit together. The one that says "User Service talks to Auth Service" and "Payment Service must never directly call Notification Service."

The architecture itself.

The Documentation Problem

Most organizations document architecture in one of these ways:

1. The Miro board — Created during a design review six months ago. Half the boxes reference services that got renamed. The arrows show dependencies that changed. Nobody updates it.

2. The Confluence page — A prose document with some embedded diagrams. "The User Service communicates with the Auth Service via REST. Exception: batch jobs use a message queue." Written in March. It's now November. The batch jobs use gRPC now. The doc still says REST.

3. The wiki graveyard — Seventeen pages titled "Architecture Overview," each from a different quarter, each contradicting the others. Nobody knows which one is current.

4. Tribal knowledge — "Ask Sarah, she knows how this works." Sarah left last month.

5. The whiteboard photo — Someone took a picture of the architecture discussion. The photo is blurry. The whiteboard was erased an hour later.

This isn't anyone's fault. Architecture documentation is hard because architecture changes constantly.

Every new service, every refactored dependency, every moved responsibility is a change to the architecture. And every change requires someone to:

- Remember to update the documentation

- Find the documentation

- Figure out which diagram/page/section to update

- Update it in a way that doesn't conflict with other ongoing changes

- Get it reviewed and approved

By the time you've done all that, the architecture changed again.

So the docs drift. Slowly at first, then faster. Within six months, they're aspirational at best and misleading at worst.

Why This Breaks Checker #10

Remember Checker #10: Architectural Consistency?

It's supposed to verify that generated code follows architectural design decisions:

- Does the code respect service boundaries?

- Does it use the prescribed patterns?

- Does it follow the data flow described in the architecture?

But how can it check against architecture documentation that's six months out of date?

The LLM reads your Architecture Document and sees "Payment Service communicates with Notification Service via message queue."

The generated code calls Notification Service directly via REST.

Is that a violation? Or did the architecture change and the doc wasn't updated?

The LLM can't tell. It flags it as a potential issue. You investigate. Turns out the architecture did change—three weeks ago, in a different PR. The doc just wasn't updated.

False positive. Trust in the checker erodes.

Or worse: the doc is wrong in the other direction. It says "Service A can call Service B directly." Service A shouldn't call Service B directly (there's a security boundary). But the doc hasn't been updated since the security model changed.

The checker passes. The bug ships. Real security issue.

This is why Checker #10 is hard. Architecture documentation lies.

Not intentionally. It just drifts.

The Fourth Meta-Language

Here's the insight that changes everything:

What if architecture wasn't documentation? What if it was a definition?

Just like you define APIs in Smithy (not Word docs), and data models in Capacitor (not Excel sheets), and UI components in Flux (not Figma screenshots):

What if you defined architecture in code?

Not "code that implements the architecture." Code that defines the architecture. Machine-readable. Queryable. Version-controlled. The source of truth.

This is Architecture as Code (AAC).

What AAC Should Be

A proper architecture-as-code system needs five properties:

1. Machine-Readable

Not a diagram. Not prose. A structured definition that can be parsed, queried, and validated programmatically.

Example (simplified):

    component UserService {
      technology = "Node.js"
      deployed_to = Kubernetes

      exposes API {
        endpoint = "https://api.example.com/users"
        protocol = REST
      }

      depends_on AuthService {
        relationship = "authenticates users via"
        protocol = REST
      }

      depends_on UserDB {
        relationship = "stores user data in"
        protocol = PostgreSQL
      }
    }

    component PaymentService {
      technology = "Java"
      deployed_to = Kubernetes

      exposes API {
        endpoint = "https://api.example.com/payments"
        protocol = REST
      }

      depends_on UserService {
        relationship = "fetches user details from"
        protocol = REST
      }

      // Constraint: MUST NOT depend on NotificationService directly
      constraint no_direct_notification {
        forbidden = depends_on NotificationService
        reason = "Use EventBus for notifications to maintain separation"
      }
    }

This is queryable:

- "Which services depend on UserService?" → `PaymentService`

- "What protocol does PaymentService use to talk to UserService?" → `REST`

- "Does PaymentService ever directly call NotificationService?" → No (forbidden by constraint)

This can be validated against actual code. Import analysis, network telemetry, traced requests—all compared against this definition.
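To make "queryable" concrete, here is a minimal sketch that mirrors the simplified example above in plain Python. The `Component` class and the three helper functions are hypothetical names chosen for illustration; they are not part of any AAC tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Component:
    """One component from the simplified example (hypothetical model)."""
    name: str
    depends_on: dict = field(default_factory=dict)  # target name -> protocol
    forbidden: set = field(default_factory=set)     # targets that must not be called


MODEL = {
    "UserService": Component(
        "UserService", {"AuthService": "REST", "UserDB": "PostgreSQL"}
    ),
    "PaymentService": Component(
        "PaymentService", {"UserService": "REST"}, forbidden={"NotificationService"}
    ),
}


def dependents_of(target: str) -> list:
    """Which components declare a dependency on `target`?"""
    return sorted(c.name for c in MODEL.values() if target in c.depends_on)


def protocol_between(source: str, target: str) -> Optional[str]:
    """Protocol declared for source -> target, or None if nothing is declared."""
    return MODEL[source].depends_on.get(target)


def may_call(source: str, target: str) -> bool:
    """A call is allowed only if it is declared and not explicitly forbidden."""
    component = MODEL[source]
    return target in component.depends_on and target not in component.forbidden


print(dependents_of("UserService"))
print(protocol_between("PaymentService", "UserService"))
print(may_call("PaymentService", "NotificationService"))
```

The three calls at the bottom correspond to the three questions in the list above. A real system would load the model from the definition files rather than hard-code it.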

2. Always Current

The definition is kept in sync with the actual running system. Not "drawn by an architect in a meeting" and then left to rot. Checked against, or derived from, reality.

Options:

- Option A (Top-Down): Engineers define architecture in AAC. The factory validates code against it. Violations block deployment.

- Option B (Bottom-Up): AAC is auto-generated from deployed services (their dependencies, protocols, exposed APIs). The definition reflects reality, always.

- Option C (Hybrid): Architects define intent in AAC. Auto-discovery fills in implementation details. Checker validates that implementation matches intent.

Most organizations want Option C: "We define the boundaries and constraints. The system fills in the details. The checker enforces the rules."
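One way the hybrid option could work in practice is a simple set comparison between declared intent and discovered reality. This is a sketch under assumptions: the function name, the report keys, and the sample data are all made up for illustration.

```python
def check_intent(declared: set, forbidden: set, discovered: set) -> dict:
    """Compare architect intent with auto-discovered dependencies for one service."""
    return {
        # Discovered calls that the architects explicitly ruled out
        "violations": sorted(discovered & forbidden),
        # Discovered calls nobody declared (candidate additions to the model)
        "undeclared": sorted(discovered - declared - forbidden),
        # Declared dependencies that discovery never observed
        "unused": sorted(declared - discovered),
    }


report = check_intent(
    declared={"UserService", "EventBus"},
    forbidden={"NotificationService"},
    discovered={"UserService", "NotificationService", "PaymentDB"},
)
print(report)
```

Violations would block deployment; undeclared and unused entries are prompts for a human to either update the intent or fix the code.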

3. Queryable with Natural Language

Engineers should be able to ask:

- "Which services depend on the Auth Service?"

- "What happens if the UserDB goes down?"

- "Show me all services tagged #critical"

- "What's the blast radius if we change the User API?"

And get instant, accurate answers. Not "go read the wiki and grep through code and ask around."

This is where AI agents + structured architecture shine. The LLM queries the AAC model using a structured API, gets precise data, and answers in natural language.
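The "blast radius" question is a good example of what a structured API behind the LLM might compute. The sketch below assumes the model has already been loaded into a plain dependency map; the service names are illustrative and `blast_radius` is a hypothetical helper, not an existing API.

```python
from collections import deque

# Edges point from a service to the things it depends on (illustrative data).
DEPENDS_ON = {
    "WebSPA": ["ApiGateway"],
    "ApiGateway": ["UserService", "PaymentService"],
    "PaymentService": ["UserService", "PaymentDB"],
    "UserService": ["AuthService", "UserDB"],
}


def blast_radius(changed: str) -> set:
    """Every service that directly or transitively depends on `changed`."""
    # Invert the edges so we can walk from a dependency to its dependents.
    reverse = {}
    for source, targets in DEPENDS_ON.items():
        for target in targets:
            reverse.setdefault(target, set()).add(source)

    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in reverse.get(current, ()):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


print(sorted(blast_radius("UserService")))
```

The LLM's job is then limited to translating the question into this call and phrasing the answer, which keeps the facts deterministic.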

4. Visualizable in Infinite Dimensions

Architecture isn't one diagram. It's many views:

- Component view: What services exist?

- Deployment view: Where do they run? (Kubernetes, Lambda, EC2)

- Dependency view: What talks to what?

- Data flow view: How does data move through the system?

- Security view: What are the trust boundaries?

- Ownership view: Which team owns which service?

Traditional diagrams force you to pick one view. AAC lets you generate any view on demand.

"Show me the deployment topology." Click. "Now show me only the #critical services." Click. "Now highlight services that depend on external APIs." Click.

Same data, infinite visualizations.
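As a rough sketch of "same data, many views", the snippet below renders a filtered dependency view as Graphviz DOT text and an ownership view as a plain grouping, both from one in-memory model. The team names are invented for the example, and the function names are hypothetical.

```python
# Illustrative model: tags and owning team per component (team names are made up).
COMPONENTS = {
    "api": {"tags": set(), "team": "platform"},
    "userService": {"tags": {"critical"}, "team": "identity"},
    "paymentService": {"tags": {"critical"}, "team": "payments"},
    "messageBus": {"tags": set(), "team": "platform"},
}
EDGES = [
    ("api", "userService"),
    ("api", "paymentService"),
    ("paymentService", "userService"),
    ("paymentService", "messageBus"),
]


def dependency_view(tag=None) -> str:
    """Dependency graph as DOT text, optionally restricted to one tag."""
    keep = {n for n, meta in COMPONENTS.items() if tag is None or tag in meta["tags"]}
    lines = ["digraph architecture {"]
    lines += [f'  "{n}";' for n in sorted(keep)]
    lines += [f'  "{s}" -> "{t}";' for s, t in EDGES if s in keep and t in keep]
    lines.append("}")
    return "\n".join(lines)


def ownership_view() -> dict:
    """Group components by owning team."""
    teams = {}
    for name, meta in COMPONENTS.items():
        teams.setdefault(meta["team"], []).append(name)
    return {team: sorted(names) for team, names in teams.items()}


print(dependency_view(tag="critical"))
print(ownership_view())
```

Each "click" in the scenario above is just another filter or projection over the same data.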

5. Temporal and Forward-Looking

Architecture changes over time. AAC should let you:

See the past:

- "What did the architecture look like last quarter?"

- "When did Service A start depending on Service B?"

- "Show me the commit that added the MessageQueue between Payment and Notification"

Track the present:

- "Which services changed this week?"

- "Alert me when a new dependency is added to #critical services"

Plan the future:

- Define your Long Term Architecture (LTA) as a high-level AAC

- Continuously measure the delta between current state and LTA

- "We want to move from monolith to microservices. Show me the gap analysis."

- "We want to migrate from REST to gRPC. Which services haven't migrated yet?"

This is living architecture. It breathes with your system.
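The "see the past" questions reduce to diffing two snapshots of the model. A minimal sketch, assuming each snapshot is a map from service to its dependencies (in practice these might be read from two revisions of the version-controlled definition; the data here is illustrative):

```python
def diff_snapshots(before: dict, after: dict) -> dict:
    """Return dependency edges added and removed between two snapshots."""
    old = {(s, t) for s, targets in before.items() for t in targets}
    new = {(s, t) for s, targets in after.items() for t in targets}
    return {"added": sorted(new - old), "removed": sorted(old - new)}


last_quarter = {
    "PaymentService": {"UserService", "NotificationService"},
}
today = {
    "PaymentService": {"UserService", "MessageQueue"},
    "NotificationService": {"MessageQueue"},
}

changes = diff_snapshots(last_quarter, today)
print(changes)
```

The same diff, run on every merge, is enough to drive an alert such as "a new dependency was added to a #critical service".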

How AAC Transforms the Factory

Remember the five checkers marked ⭐ in the checker registry? These are the ones that become dramatically more powerful with structured architecture.

Let's see how:

Checker #10: Architectural Consistency ⭐⭐⭐ (Biggest Impact)

Without AAC:

- LLM reads prose architecture document

- Interprets it (slowly, ambiguously)

- Compares generated code against interpreted intent

- High false positive rate (doc is outdated)

- High false negative rate (ambiguous prose)

- Expensive (full LLM analysis every time)

With AAC:

- Programmatic diff: declared relationships in `.c4` vs. actual import statements in code

- "PaymentService defines `depends_on UserService`. Does the generated code import UserService? Yes. ✓"

- "PaymentService defines `forbidden = depends_on NotificationService`. Does the code import NotificationService? Yes. ❌ Block deployment."

- Fast (milliseconds), deterministic, zero tokens

- LLM only needed for nuanced behavioral patterns that go beyond structure

This checker shifts from 100% LLM (expensive, slow) to 80% programmatic + 20% LLM (fast, cheap, accurate).
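Here is a sketch of what the programmatic part could look like. For brevity it analyzes Python source with the standard `ast` module; a Java service like PaymentService would need a Java parser instead. The `CLIENT_PACKAGES` mapping from package name to architecture component is an assumption, as are the package names themselves.

```python
import ast

# Hypothetical mapping from client package name to architecture component.
CLIENT_PACKAGES = {
    "user_client": "UserService",
    "notification_client": "NotificationService",
}


def imported_packages(source: str) -> set:
    """Root package names imported anywhere in the given Python source."""
    packages = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            packages.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            packages.add(node.module.split(".")[0])
    return packages


def check_imports(source: str, allowed: set, forbidden: set) -> dict:
    """Diff the components a file actually imports against the declared model."""
    used = {CLIENT_PACKAGES[p] for p in imported_packages(source) if p in CLIENT_PACKAGES}
    return {
        "forbidden": sorted(used & forbidden),
        "undeclared": sorted(used - allowed - forbidden),
    }


generated = """
import user_client
from notification_client.api import send_email
"""

result = check_imports(
    generated, allowed={"UserService"}, forbidden={"NotificationService"}
)
print(result)
```

The source is only parsed, never executed, so the check is safe to run on untrusted generated code. A non-empty `forbidden` list is the "block deployment" case from the bullets above.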

Checker #9: Requirement Traceability ⭐

Without AAC:

- LLM reads PRD, reads code, tries to match requirements to implementation

- Per-ticket visibility only

With AAC:

- Requirements are linked to components in the AAC model

- "Requirement REQ-47: User can update profile. Implementing component: UserService.ProfileUpdate endpoint."

- Query: "Which requirements have no implementing component?" → Cross-system visibility, not just per-ticket

You can now trace requirements across the entire architecture, not just the service you're building.
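The cross-system query can be sketched as a lookup over requirement-to-component links. REQ-47 comes from the example above; REQ-48 and the helper names are hypothetical, added only so the query has something to find.

```python
REQUIREMENTS = {
    "REQ-47": "User can update profile",
    "REQ-48": "User can export payment history",  # hypothetical requirement
}

# Links from requirement ID to implementing components in the AAC model.
IMPLEMENTED_BY = {
    "REQ-47": ["UserService.ProfileUpdate"],
}


def unimplemented() -> list:
    """Requirements with no implementing component anywhere in the model."""
    return sorted(req for req in REQUIREMENTS if not IMPLEMENTED_BY.get(req))


def requirements_touching(service: str) -> list:
    """Requirements implemented by any endpoint of the given service."""
    return sorted(
        req
        for req, components in IMPLEMENTED_BY.items()
        if any(c.split(".")[0] == service for c in components)
    )


print(unimplemented())
print(requirements_touching("UserService"))
```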

Checker #14: Escaped Defect Analyzer ⭐

Without AAC:

- Root cause analysis requires querying multiple disconnected data stores

- "Was it the code? The deployment? The infrastructure? Let me check CloudWatch, then Grafana, then Git..."

- LLM has to assemble context manually in the prompt

With AAC:

- Checker outputs are annotated against the AAC model

- "Bug in UserService.ProfileUpdate → Query AAC → See it depends on AuthService and UserDB → Check metrics for both → AuthService latency spiked at incident time → Root cause: AuthService"

- The LLM reasons over a unified structure, not disconnected logs

Root cause analysis becomes systematic, not archeological.
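A sketch of the systematic walk described above: start at the failing component, look up its dependencies in the model, and rank them by how far their latency moved at incident time. The latency numbers and the threshold are invented for illustration; a real checker would pull them from your metrics store.

```python
DEPENDS_ON = {"UserService": ["AuthService", "UserDB"]}

# Hypothetical p99 latency in ms: (baseline, at incident time).
LATENCY_MS = {
    "UserService": (120, 900),
    "AuthService": (40, 800),
    "UserDB": (5, 6),
}


def suspects(component: str, threshold: float = 3.0) -> list:
    """Dependencies whose incident latency is at least `threshold` times baseline."""
    ranked = []
    for dep in DEPENDS_ON.get(component, []):
        baseline, incident = LATENCY_MS[dep]
        ratio = incident / baseline
        if ratio >= threshold:
            ranked.append((dep, ratio))
    # Largest deviation first
    return sorted(ranked, key=lambda item: item[1], reverse=True)


print(suspects("UserService"))
```

The output is a shortlist for the LLM (or a human) to reason over, rather than a pile of disconnected dashboards.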

Checker #18: Chaos Resilience ⭐

Without AAC:

- LLM reads prose architecture: "The Payment Service calls the Notification Service... I think?"

- Designs generic chaos experiments: "What if we add latency?"

With AAC:

- LLM queries AAC: "PaymentService depends on [UserService, PaymentDB, MessageQueue]"

- LLM sees metadata: `#critical`, `#stateful`, `deployed_to=Kubernetes`

- Designs targeted experiments: "PaymentService is #critical. Let's test: UserService timeout, PaymentDB connection loss, MessageQueue unavailable. Priority: high."

Chaos experiments go from generic to surgical.
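To illustrate how structured metadata narrows the experiment space, the sketch below derives one experiment per dependency and sets priority from tags. The dependency kinds and the `FAILURE_MODES` table are assumptions; in the factory an LLM would do this step with more nuance.

```python
# Assumed mapping from dependency kind to the failure worth injecting.
FAILURE_MODES = {
    "service": "timeout",
    "database": "connection loss",
    "queue": "unavailable",
}

MODEL = {
    "PaymentService": {
        "tags": {"critical", "stateful"},
        "depends_on": {
            "UserService": "service",
            "PaymentDB": "database",
            "MessageQueue": "queue",
        },
    },
}


def experiments(name: str) -> list:
    """One targeted chaos experiment per declared dependency."""
    component = MODEL[name]
    priority = "high" if "critical" in component["tags"] else "normal"
    return [
        {"target": name, "inject": f"{dep} {FAILURE_MODES[kind]}", "priority": priority}
        for dep, kind in component["depends_on"].items()
    ]


for experiment in experiments("PaymentService"):
    print(experiment)
```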

Checker #16: Documentation Freshness ⭐

Without AAC:

- Compare prose documentation against code (ambiguous)

- Manually verify OpenAPI specs match actual endpoints, README setup instructions work

- "The doc says 'REST.' Is that still true? Let me grep imports..."

With AAC:

- The `.c4` model is the truth

- Prose documentation, OpenAPI specs, and README architecture sections are generated from the AAC model

- Drift detection: "The AAC model says PaymentService depends on UserService. The prose doc doesn't mention this dependency. Flag for update."

In the ideal end-state, this checker becomes unnecessary for architecture docs—because the AAC is the documentation. (Still needed for API specs and README setup instructions.)
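Until docs are fully generated, drift detection can start very simply. The sketch below flags dependencies from the model that a prose document never mentions. It uses naive case-insensitive substring matching, which is an assumption that will miss paraphrases; the sample text is invented.

```python
def undocumented_dependencies(dependencies: set, prose: str) -> list:
    """Dependencies declared in the model that the prose never mentions."""
    text = prose.lower()
    return sorted(dep for dep in dependencies if dep.lower() not in text)


doc = (
    "PaymentService stores payment data in PaymentDB "
    "and publishes events to the EventBus."
)

missing = undocumented_dependencies({"UserService", "PaymentDB", "EventBus"}, doc)
print(missing)
```

Anything in `missing` becomes a "flag for update" item against that document.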

Long Term Architecture: The North Star

Here's where AAC becomes strategic, not just tactical.

Most organizations have some sense of where they want their architecture to go:

- "We want to break up the monolith into microservices"

- "We want to move from REST to gRPC"

- "We want to introduce an event-driven architecture"

- "We want to migrate from AWS to multi-cloud"

But how do you track progress? How do you know how close you are?

With AAC, you can define your Long Term Architecture (LTA) as a high-level model:

    // Current architecture (auto-generated from reality)
    system current {
      component Monolith {
        contains = [UserModule, PaymentModule, NotificationModule]
      }
    }

    // Long Term Architecture (defined by architects)
    system LTA {
      component UserService {
        extracted_from = Monolith.UserModule
        depends_on = AuthService
      }

      component PaymentService {
        extracted_from = Monolith.PaymentModule
        depends_on = [UserService, PaymentDB]
      }

      component NotificationService {
        extracted_from = Monolith.NotificationModule
        consumes_events_from = EventBus
      }
    }

Now you can query the gap:

- "Which modules in the Monolith haven't been extracted yet?" → `NotificationModule`

- "What % of the LTA is implemented?" → 67% (2 of 3 services extracted)

- "What's the next migration step?" → Extract `NotificationService`

Every sprint, you measure progress toward the LTA. Architecture migration becomes measurable, not aspirational.
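The gap queries come down to comparing the target model with what is deployed. This sketch assumes UserService and PaymentService have already been extracted, which is the state the answers above imply; the `DEPLOYED` set and the `gap` helper are hypothetical.

```python
# Target service -> monolith module it is extracted from (from the LTA model).
LTA = {
    "UserService": "UserModule",
    "PaymentService": "PaymentModule",
    "NotificationService": "NotificationModule",
}

# Assumption: services that auto-discovery currently sees running on their own.
DEPLOYED = {"UserService", "PaymentService"}


def gap() -> dict:
    """Measure how far the current state is from the Long Term Architecture."""
    remaining = {svc: mod for svc, mod in LTA.items() if svc not in DEPLOYED}
    done = len(LTA) - len(remaining)
    return {
        "percent_done": round(100 * done / len(LTA)),
        "modules_left": sorted(remaining.values()),
        "next_steps": [f"Extract {svc}" for svc in sorted(remaining)],
    }


print(gap())
```

Run once per sprint and stored, the `percent_done` value gives you a migration trend line instead of a status-meeting opinion.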

The Vision: Architecture That Validates Itself

Here's the full picture:

- Architects define the architecture in AAC (boundaries, constraints, LTA)

- Auto-discovery fills in details (which services actually exist, what they depend on)

- The factory generates code based on requirements

- Checkers validate that generated code respects the architectural constraints

- Deployment updates the AAC with the new reality (service deployed, new dependency added)

- The AAC model stays current because it's derived from running systems

- Documentation is generated from the AAC (diagrams, wikis, API docs)

- LTA tracks how far current architecture is from target architecture

Architecture isn't a document that drifts. It's a definition that validates.

A Real Example: LikeC4

One implementation of Architecture as Code is LikeC4 (OSS):

- Structured DSL for defining components, relationships, boundaries

- Interactive diagrams that render from the `.c4` files

- MCP server for AI agent queries ("Which services depend on X?")

- React/web components for embedding views in dashboards/wikis

- Version-controlled `.c4` files alongside code

Example .c4 file:

    // Element kinds and tags must be declared before the model can use them
    specification {
      element person
      element component
      tag critical
    }

    model {
      customer = person "Customer" {
        description "User of the system"
      }

      spa = component "Web SPA" {
        technology "React"
        -> api "makes API calls to"
      }

      api = component "API Gateway" {
        technology "Node.js"
        -> userService "routes user requests to"
        -> paymentService "routes payment requests to"
      }

      userService = component "User Service" {
        #critical
        technology "Node.js"
        -> userDB "stores user data in"
      }

      paymentService = component "Payment Service" {
        #critical
        technology "Java"
        -> paymentDB "stores payment data in"
        -> userService "fetches user details from"
        -> messageBus "publishes payment events to"
      }

      messageBus = component "Event Bus" {
        technology "RabbitMQ"
      }

      // Data stores referenced by the services above
      userDB = component "User DB"
      paymentDB = component "Payment DB"
    }

From this single definition:

- Generate component diagram

- Generate deployment view

- Generate dependency graph

- Query: "What depends on userService?" → `[api, paymentService]`

- Query: "Which services are #critical?" → `[userService, paymentService]`

- Validate: "Does paymentService's code actually import only these dependencies?"

This is architecture that can be queried, visualized, and validated.

What This Means for the Factory

With AAC integrated into the factory:

Before (without AAC):

- Architectural Consistency Checker relies on LLM interpreting prose

- Slow, expensive, ambiguous

- High false positive rate (docs drift)

- Trust erodes over time

After (with AAC):

- Architectural Consistency Checker diffs structured definitions against actual code

- Fast, cheap, deterministic

- Low false positive rate (AAC stays current)

- Trust builds over time

Five other checkers become more powerful:

- Requirement Traceability: Cross-system visibility

- Escaped Defect Analyzer: Unified correlation

- Chaos Resilience: Targeted experiments

- Documentation Freshness: Generated docs

- Factory Self-Assessment: Dimensional correlation

And you get new capabilities:

- LTA tracking (measure progress toward target architecture)

- Temporal analysis (when did this dependency get added?)

- Natural language queries ("Show me blast radius of changing User API")

- Multi-dimensional visualization (component view, deployment view, security view)

Architecture becomes a first-class citizen in the factory, not an afterthought.

The Integration Point

Here's how AAC fits into the pipeline:

- Schema Validation (#1) now includes AAC validation

  - Are the `.c4` files syntactically correct?

  - Do component names match actual services?

- Architectural Consistency (#10) becomes hybrid

  - Programmatic: diff AAC against code imports/dependencies (fast, cheap)

  - LLM: check behavioral patterns beyond structure (only when needed)

- Post-Deployment Health (#12) annotates results against AAC

  - "UserService health check failed → Query AAC → See it depends on AuthService and UserDB → Check both"

- Escaped Defect Analyzer (#14) uses AAC for correlation

  - "Bug in component X → Query AAC → See dependencies → Check metrics for all related components"

- Chaos Resilience (#18) uses AAC for experiment design

  - LLM reads AAC model, designs targeted chaos scenarios

- Factory Self-Assessment (#20) uses AAC for dimensional analysis

  - "Show me #critical services with rising costs AND declining coverage"

The AAC model is the connective tissue that makes all the checkers smarter.
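As one example of that connective tissue, the Factory Self-Assessment query above is a join between tags from the AAC model and trends from other checkers. A sketch with invented numbers (the trend fields and their sign conventions are assumptions):

```python
# Tags come from the AAC model; trends would come from cost and coverage checkers.
# Positive cost_trend = costs rising; negative coverage_trend = coverage declining.
SERVICES = [
    {"name": "userService", "tags": {"critical"}, "cost_trend": 0.12, "coverage_trend": -0.04},
    {"name": "paymentService", "tags": {"critical"}, "cost_trend": 0.03, "coverage_trend": 0.02},
    {"name": "messageBus", "tags": set(), "cost_trend": 0.20, "coverage_trend": -0.10},
]


def at_risk(tag: str) -> list:
    """Services with the given tag, rising costs, and declining test coverage."""
    return [
        s["name"]
        for s in SERVICES
        if tag in s["tags"] and s["cost_trend"] > 0 and s["coverage_trend"] < 0
    ]


print(at_risk("critical"))
```

Without the tags from the model, the cost and coverage data have no shared dimension to correlate on.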

The Reality Check

Let's be honest about what this requires:

If you already have architecture documentation:

- Someone needs to translate it into AAC (1-2 weeks for initial model)

- Ongoing: update AAC when architecture changes (part of normal development)

If you're starting from scratch:

- Define the AAC model as you build (parallel to defining APIs and data models)

- Start small: define service boundaries first, add details over time

If you have a large existing system:

- Use auto-discovery tools to bootstrap the model from reality

- Refine the auto-generated model with constraints and intent

- Validate over time

Is it more work than not having AAC? Yes.

Is it less work than maintaining wikis, diagrams, and prose docs that constantly drift? Absolutely.

And the payoff: Checker #10 shifts from 100% LLM to 80% programmatic. Five other checkers become dramatically more powerful. Architecture becomes queryable, visualizable, and self-validating.

This is the fourth meta-language. And it makes the other three more powerful.

What's Next

We've covered the full stack:

- The problem (best practices we abandoned)

- The solution (machines do the boring work)

- The architecture (20 checkers in 4 tiers)

- The maturity (managed → optimizing progression)

- The metrics (what to measure)

- The observability (how to capture data)

- The self-healing (how to improve continuously)

- The tooling (70% off-the-shelf)

- The architecture (AAC as the fourth meta-language)

One question remains: What does this mean strategically?

How should engineering leaders think about AI tooling? What separates "AI autocomplete" from "AI factory"? Where is the industry heading? And what should you do differently?

In the final post, we'll step back and look at the bigger picture: rethinking your AI tooling strategy in light of everything we've covered.

Because the question isn't just "can I build this?" The question is "should I build this, and if so, what changes about how I think about AI in software development?"

Next in the series: Rethinking Your AI Tooling Strategy — The sophistication spectrum, what to ask your AI tools, the cost curve shift, and what engineering leaders should demand from AI systems in 2026 and beyond.
