Part 8 of 10 in Fully Functional Factory

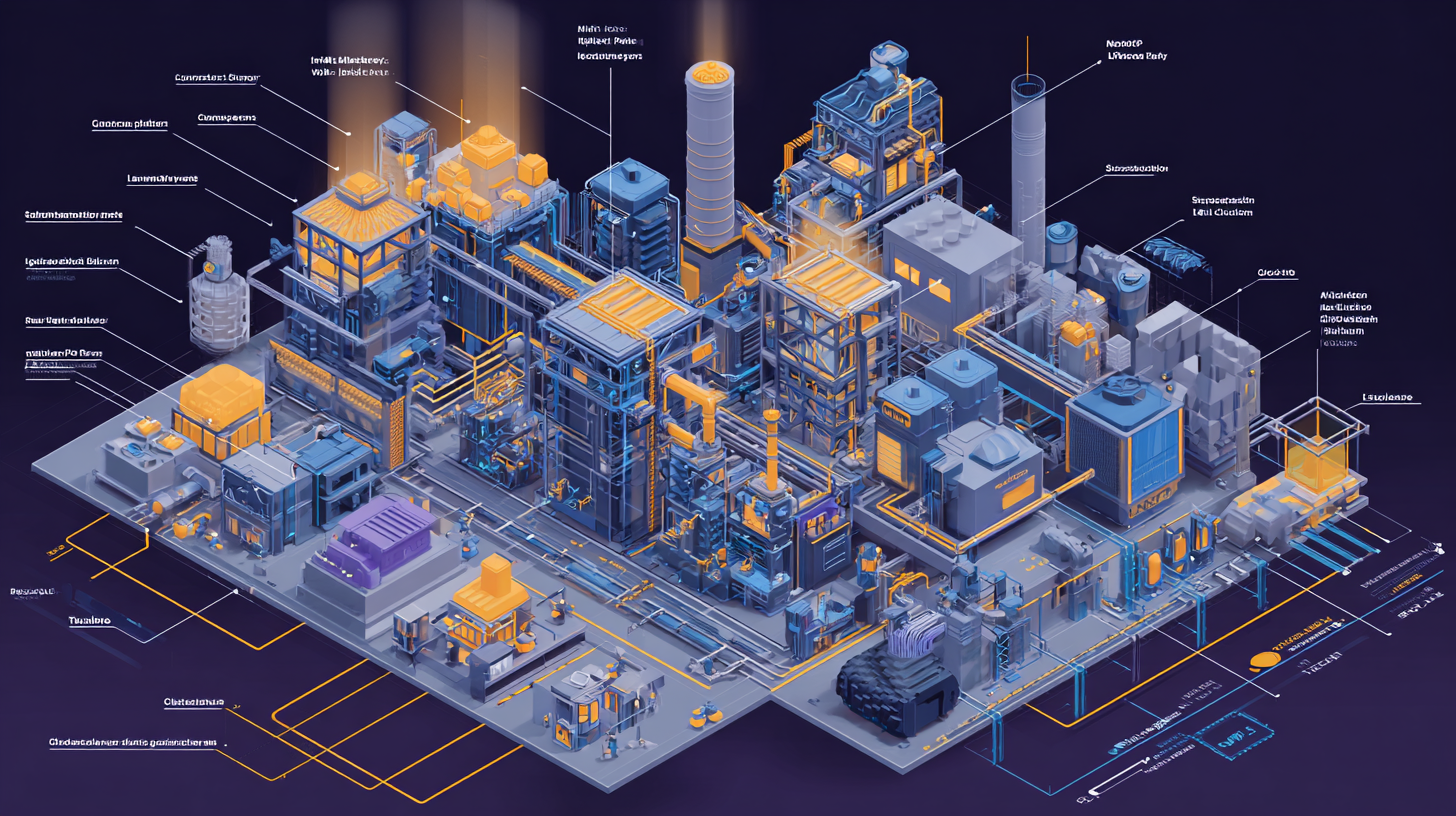

Standing on Giants: The Composable Stack

We've covered a lot of ground. Twenty checkers. Self-healing pipelines. Observability infrastructure. Root cause analysis. Chaos engineering.

You might be thinking: "This sounds like years of engineering work to build from scratch."

Here's the revelation: You don't build it from scratch. You compose it from existing pieces.

In one real-world implementation, combining open-source and commercial tools, approximately 70% of the checker system was built with off-the-shelf tooling. The remaining 30% was custom integration and domain-specific logic.

The wheels are already built. You just need to teach machines to turn them.

The Composability Principle

Here's the architectural insight that changes everything:

Separate concerns into independent layers. Make each layer swappable. Wire them together with standard interfaces.

This isn't new. It's how Unix tools work. It's how microservices work. It's how cloud infrastructure works.
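To make "standard interfaces" concrete, here is a minimal sketch in Python. The names (`Checker`, `CheckResult`, `SemgrepScan`, `CodeQLScan`, `run_pipeline`) are hypothetical, and the tool classes are placeholders rather than real integrations. The point is only that the pipeline depends on the interface, not on a specific tool.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class CheckResult:
    checker: str       # which checker slot produced this result
    passed: bool
    details: str = ""


class Checker(Protocol):
    """The 'standard interface': anything with a name and a run() method."""
    name: str

    def run(self, workspace: str) -> CheckResult: ...


class SemgrepScan:
    name = "security"

    def run(self, workspace: str) -> CheckResult:
        # Placeholder: a real implementation would invoke the Semgrep CLI here.
        return CheckResult(self.name, passed=True, details=f"semgrep scanned {workspace}")


class CodeQLScan:
    name = "security"

    def run(self, workspace: str) -> CheckResult:
        # Placeholder for a different tool behind the same interface.
        return CheckResult(self.name, passed=True, details=f"codeql scanned {workspace}")


def run_pipeline(checkers: list[Checker], workspace: str) -> list[CheckResult]:
    """The pipeline only knows the interface, never a specific tool."""
    return [checker.run(workspace) for checker in checkers]


results = run_pipeline([SemgrepScan()], "./service")
swapped = run_pipeline([CodeQLScan()], "./service")  # same pipeline, different tool
```

Swapping the scanner changes one element of the list. Nothing in `run_pipeline` has to know.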

The same principle applies to your factory:

Layer 1: Schema Validation

Purpose: Validate that your definitions are correct before any code generation begins

Off-the-shelf:

- Smithy CLI — AWS's open-source tool for validating, building, and diffing API definitions

- Standard language compilers (tsc, javac, rustc) for generated code

Custom:

- Validators for proprietary meta-languages (if you have them)

- Cross-reference checks between different definition types

Why this layer exists: If your definitions are wrong, everything downstream is wrong. Catch it early.
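A cross-reference check can be very small. The sketch below assumes a hypothetical setup where one definition type lists operations and the shapes they reference, and another declares the shapes. All names and data here are invented for illustration.

```python
# Hypothetical inputs: operation names mapped to the shape names they reference,
# plus the set of shapes that a separate data-model definition actually declares.
def dangling_references(
    operations: dict[str, list[str]], declared_shapes: set[str]
) -> dict[str, list[str]]:
    """Return {operation: [missing shapes]} for references that resolve nowhere."""
    problems = {}
    for operation, referenced in operations.items():
        missing = [shape for shape in referenced if shape not in declared_shapes]
        if missing:
            problems[operation] = missing
    return problems


operations = {
    "CreateOrder": ["OrderInput", "Order"],
    "GetInvoice": ["InvoiceId", "Invoice"],
}
declared_shapes = {"OrderInput", "Order", "InvoiceId"}

problems = dangling_references(operations, declared_shapes)
```

Here `GetInvoice` references an `Invoice` shape that nobody declared, which is exactly the kind of error you want to catch before code generation starts.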

Layer 2: Security Scanning

Purpose: Find vulnerabilities in code and dependencies

Off-the-shelf:

- Semgrep — Fast pattern-matching SAST with custom rules (OSS)

- Trivy — Container, filesystem, and dependency vulnerability scanner (OSS)

- TruffleHog — Secret detection in code and commits (OSS)

Custom:

- LLM-based logic-level security analysis for business logic

Why this layer exists: Security can't be retrofitted. Build it into the pipeline.

Layer 3: Code Quality

Purpose: Enforce style, conventions, and best practices

Off-the-shelf:

- ESLint + Prettier — JavaScript/TypeScript linting and formatting (OSS)

- Ruff — Python linting and formatting, extremely fast (OSS)

- Biome — All-in-one JS/TS formatter/linter (OSS)

Custom:

- None. Linting is a solved problem. Use existing tools.

Why this layer exists: Consistency reduces cognitive load and prevents style drift.

Layer 4: Testing & Coverage

Purpose: Verify code behavior and measure how much is tested

Off-the-shelf:

- Istanbul/nyc — JavaScript/TypeScript coverage (OSS)

- JaCoCo — Java coverage (OSS)

- coverage.py — Python coverage (OSS)

- Codecov — Coverage aggregation and enforcement (Free for OSS)

Custom:

- LLM test generation for uncovered paths (only when coverage falls below threshold)

Why this layer exists: Untested code is untrustworthy code.
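The "only when coverage falls below threshold" rule can be sketched as a gate in front of the LLM. This example assumes a report shaped like the JSON that coverage.py writes with `coverage json` (a `totals.percent_covered` number and per-file `missing_lines`). The 80% threshold and the function name are assumptions, not values from the implementation described here.

```python
import json

THRESHOLD = 80.0  # assumed project policy, not a value from the article


def files_needing_tests(report_text: str, threshold: float = THRESHOLD) -> dict[str, list[int]]:
    """Return {file: missing_lines} only when total coverage is below threshold."""
    report = json.loads(report_text)
    if report["totals"]["percent_covered"] >= threshold:
        return {}  # gate passes: no LLM call, no token spend
    return {
        path: data["missing_lines"]
        for path, data in report["files"].items()
        if data["missing_lines"]
    }


# Hand-written sample in the same shape as a coverage.py JSON report.
SAMPLE_REPORT = json.dumps({
    "totals": {"percent_covered": 72.5},
    "files": {
        "app/orders.py": {"missing_lines": [41, 42, 57]},
        "app/health.py": {"missing_lines": []},
    },
})

targets = files_needing_tests(SAMPLE_REPORT)
```

The returned mapping is what you would hand to the test-generation prompt, so the model only sees the uncovered paths.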

Layer 5: Post-Deployment Validation

Purpose: Verify the deployed service actually works

Off-the-shelf:

- Schemathesis — Auto-generates API tests from OpenAPI/GraphQL specs (OSS)

- k6 — Load testing and functional API checks (OSS)

- Keploy — Records real traffic and replays as regression tests (OSS)

- Pact — Contract testing framework (OSS)

Custom:

- Integration with your deployment pipeline

Why this layer exists: "Tests pass locally" ≠ "works in production"

Layer 6: Observability & Metrics

Purpose: Instrument the pipeline and visualize performance

Off-the-shelf:

- OpenTelemetry — Instrumentation SDK, vendor-neutral (OSS, CNCF)

- Prometheus — Time-series metrics database (OSS, CNCF)

- Grafana — Visualization and dashboarding (OSS + Commercial)

- Langfuse — LLM observability and prompt tracking (OSS)

- LiteLLM — LLM cost tracking and budget control (OSS)

Custom:

- Dashboard templates specific to your factory's checkers

Why this layer exists: Can't improve what you don't measure.
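As a rough illustration of what "instrument the pipeline" means, here is a standard-library-only stand-in that times a checker and renders the numbers in the Prometheus text exposition format. In a real pipeline the OpenTelemetry SDK or a Prometheus client library would do this work. The metric name `checker_duration_seconds` is made up for the example.

```python
import time
from contextlib import contextmanager

durations: dict[str, float] = {}


@contextmanager
def timed(checker: str, clock=time.perf_counter):
    """Record how long a checker took, even if it raises."""
    start = clock()
    try:
        yield
    finally:
        durations[checker] = clock() - start


def render_prometheus(samples: dict[str, float]) -> str:
    """Render samples in the Prometheus text exposition format."""
    lines = ["# TYPE checker_duration_seconds gauge"]
    for checker, seconds in sorted(samples.items()):
        lines.append(f'checker_duration_seconds{{checker="{checker}"}} {seconds:.3f}')
    return "\n".join(lines) + "\n"


with timed("style"):
    sum(range(1000))  # stand-in for running a real checker

# Fixed sample values so the rendered text is predictable.
exposition = render_prometheus({"style": 0.012, "security": 4.5})
```

One labeled series per checker is enough to start answering "which checker is slow?" on a dashboard.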

Layer 7: Resilience & Chaos

Purpose: Validate the system handles failures gracefully

Off-the-shelf:

- LitmusChaos — Kubernetes chaos engineering (OSS, CNCF)

- Chaos Mesh — Kubernetes chaos with diverse fault scenarios (OSS, CNCF)

- ToxiProxy — Network condition simulation (OSS)

Custom:

- LLM-based experiment design from architecture documents

Why this layer exists: Production will fail. Better to find out on your terms.

Layer 8: Compliance & Audit

Purpose: Generate evidence that your process was followed

Off-the-shelf:

- in-toto — Software supply chain integrity (OSS, CNCF)

- Sigstore/Cosign — Artifact signing and verification (OSS)

Custom:

- Aggregation logic to link code → requirement → decision

Why this layer exists: Regulated industries need audit trails. Everyone else benefits from traceability.
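The custom aggregation piece might look something like the sketch below: a record that ties a requirement and a decision to the exact contents of a file. The ID formats (`REQ-101`, `ADR-007`) and all names are hypothetical. Signing the resulting bundle is left to tools like Cosign or in-toto and is not shown.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TraceLink:
    requirement_id: str   # e.g. a ticket key (hypothetical format)
    decision_id: str      # e.g. an architecture decision record
    artifact_path: str
    artifact_sha256: str  # pins the link to exact file contents


def link_artifact(requirement_id: str, decision_id: str, path: str, content: bytes) -> TraceLink:
    digest = hashlib.sha256(content).hexdigest()
    return TraceLink(requirement_id, decision_id, path, digest)


def evidence_bundle(links: list[TraceLink]) -> str:
    """Serialize deterministically so the bundle itself can be hashed or signed."""
    return json.dumps([asdict(link) for link in links], sort_keys=True, indent=2)


link = link_artifact("REQ-101", "ADR-007", "src/orders.py", b"def create_order(): ...\n")
bundle = evidence_bundle([link])
```

Because the link stores a content hash, any later change to the file invalidates the evidence instead of silently inheriting it.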

The 70/30 Split

Here's the breakdown from one real implementation:

Off-the-Shelf (70%):

- Schema validation: Smithy CLI, standard compilers

- Security: Semgrep, Trivy, TruffleHog

- Code quality: ESLint, Prettier, Ruff

- Testing: Istanbul, JaCoCo, coverage.py, Codecov

- Post-deployment: Schemathesis, k6, Keploy, Pact

- Observability: OpenTelemetry, Prometheus, Grafana

- LLM tooling: Langfuse, LiteLLM

- Chaos: LitmusChaos

- Compliance: in-toto, Sigstore

Custom (30%):

- Proprietary meta-language validators (if applicable)

- LLM-based checkers (#9, #10, #14, #16, #20):

  - Requirement traceability

  - Architectural consistency

  - Escaped defect analysis

  - Documentation freshness

  - Factory self-assessment

- Pipeline orchestration (wiring tools together)

- Integration with ticketing/project management systems

The majority of the work is integration, not invention.

A Concrete Tool Map

Let's map the 20 checkers from Post 3 to specific tools:

| Checker | Tool(s) | License | Custom? |
|---|---|---|---|
| #1 Schema Validation | Smithy CLI, tsc/javac | OSS | Partial |
| #2 Pipeline Metrics | OpenTelemetry, Prometheus | OSS | Config |
| #3 Contract Compatibility | Smithy Diff, Pact | OSS | Partial |
| #4 Generated Code Integrity | Standard compilers | OSS | Config |
| #5 Build & Compile | tsc, javac, rustc, etc. | OSS | Config |
| #6 Test Coverage Gate | Istanbul/JaCoCo + Codecov | OSS | Hybrid |
| #7 Style & Convention | ESLint, Prettier, Ruff | OSS | Config |
| #8 Security Scanner | Semgrep, Trivy, TruffleHog | OSS | Hybrid |
| #9 Requirement Traceability | LLM + Langfuse | OSS/Custom | Custom |
| #10 Architectural Consistency | ArchUnit, LLM | OSS/Custom | Custom |
| #11 Cost Guard | LiteLLM or Helicone | OSS | Config |
| #12 Post-Deployment Health | Schemathesis, k6 | OSS | Config |
| #13 Regression Detector | Keploy, Pact | OSS | Config |
| #14 Escaped Defect Analyzer | LLM + Langfuse | OSS/Custom | Custom |
| #15 Prompt Effectiveness | Langfuse or LangSmith | OSS/Commercial | Hybrid |
| #16 Documentation Freshness | LLM + Smithy CLI | OSS/Custom | Custom |
| #17 Performance Benchmark | k6, Locust | OSS | Config |
| #18 Chaos Resilience | LitmusChaos, Chaos Mesh | OSS | Hybrid |
| #19 Compliance & Audit | in-toto, Sigstore | OSS | Config |
| #20 Factory Self-Assessment | Grafana + LLM | OSS/Custom | Custom |

Summary:

- Fully off-the-shelf with config: 9 checkers (45%)

- Partial (off-the-shelf plus custom validators): 2 checkers (10%)

- Hybrid (OSS + light custom): 4 checkers (20%)

- Custom LLM logic required: 5 checkers (25%)

The foundation is already built. The custom work is domain-specific judgment.

Why This Wasn't Possible 5 Years Ago

Here's the important historical context: This convergence is recent.

2019:

- OpenTelemetry had only just been announced (formed 2019, matured 2021)

- Smithy was internal to AWS (open-sourced 2020)

- Semgrep was just launched (2019)

- LangChain didn't exist (2022)

- Claude and GPT-4-class models didn't exist (2023-2024)

- Schemathesis was early-stage (2019)

- LitmusChaos was nascent (2019, matured 2020-2021)

2021:

- The CNCF observability stack matured (OTel, Prometheus, Grafana)

- Chaos engineering tools moved from Netflix-internal to OSS-standard

- SAST tools became fast enough for CI/CD (Semgrep <10s scans)

2023-2024:

- Frontier LLMs became capable enough for code reasoning

- Token costs dropped roughly 10x for comparable capability across model generations (GPT-3.5 → GPT-4 Turbo → Claude 3.5)

- LLM observability tools emerged (Langfuse, LangSmith)

- Prompt engineering became a discipline

2026 (Now):

- All the building blocks exist

- All the tools are mature

- All the patterns are documented

- The ecosystem is ready

This wasn't possible to build 5 years ago because the ecosystem wasn't ready. It's possible now because everything converged at once.

The Composability Advantage

Here's why building on existing tools beats building from scratch:

1. Swap Without Rewrite

Don't like Semgrep? Swap in CodeQL. Don't like Prometheus? Use Datadog. Don't like k6? Use Locust.

Each layer has standard interfaces:

- Security scanners such as Semgrep, Trivy, and CodeQL can emit SARIF

- Coverage tools emit widely supported report formats (LCOV, Cobertura XML, JaCoCo XML)

- Observability uses the OpenTelemetry Protocol (OTLP)

- Chaos tools target Kubernetes APIs

Change one tool without touching the rest of the pipeline.
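Here is a sketch of why a shared output format makes the swap cheap. It flattens a SARIF 2.1.0 log into tool-agnostic findings, so downstream code never learns which scanner ran. The `Finding` type, the function name, and the sample log are assumptions for illustration. Only the SARIF field names come from the format itself.

```python
import json
from dataclasses import dataclass


@dataclass
class Finding:
    tool: str
    rule_id: str
    level: str
    file: str
    line: int
    message: str


def findings_from_sarif(sarif_text: str) -> list[Finding]:
    """Flatten a SARIF 2.1.0 log into tool-agnostic findings."""
    log = json.loads(sarif_text)
    findings = []
    for run in log.get("runs", []):
        tool = run["tool"]["driver"]["name"]
        for result in run.get("results", []):
            # A result may carry zero or more locations; use the first if present.
            locations = result.get("locations") or [{}]
            physical = locations[0].get("physicalLocation", {})
            findings.append(Finding(
                tool=tool,
                rule_id=result.get("ruleId", "unknown"),
                level=result.get("level", "warning"),  # fall back to "warning" when absent
                file=physical.get("artifactLocation", {}).get("uri", ""),
                line=physical.get("region", {}).get("startLine", 0),
                message=result.get("message", {}).get("text", ""),
            ))
    return findings


# Hand-written sample log; the tool name is whatever the scanner reports.
SAMPLE = json.dumps({
    "version": "2.1.0",
    "runs": [{
        "tool": {"driver": {"name": "ExampleScanner"}},
        "results": [{
            "ruleId": "hardcoded-secret",
            "level": "error",
            "message": {"text": "Possible hardcoded credential"},
            "locations": [{"physicalLocation": {
                "artifactLocation": {"uri": "src/config.py"},
                "region": {"startLine": 12},
            }}],
        }],
    }],
})

findings = findings_from_sarif(SAMPLE)
blocking = [f for f in findings if f.level == "error"]
```

The gate logic (`blocking`) is written against `Finding`, so replacing one SARIF-emitting scanner with another should not require touching it.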

2. Scale Incrementally

Start with the foundation (Tier 1: checkers #1-4). Add quality gates when you need them (Tier 2: #5-8). Add intelligence when you're ready (Tier 3: #9-13). Add optimization when it matters (Tier 4: #14-20).

You don't need all 20 checkers on day one.

A minimal viable factory might run:

- Schema Validation (#1)

- Build & Compile (#5)

- Style (#7)

- Security Scanner (#8)

- Pipeline Metrics (#2)

That's 5 checkers, all off-the-shelf, deployable in a weekend.
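One way to keep rollout incremental is to treat the enabled set as configuration. The sketch below encodes the tier boundaries and the five-checker starting set from this section. The function and variable names are hypothetical.

```python
from typing import Iterable

# Tier boundaries as listed above; IDs follow the series' checker numbering.
TIERS = {
    1: range(1, 5),    # foundation: #1-4
    2: range(5, 9),    # quality gates: #5-8
    3: range(9, 14),   # intelligence: #9-13
    4: range(14, 21),  # optimization: #14-20
}

MINIMAL_FACTORY = {1, 2, 5, 7, 8}  # the five-checker starting set above


def enabled_checkers(max_tier: int, always_on: Iterable[int] = ()) -> list[int]:
    """All checkers up to max_tier, plus any hand-picked extras."""
    enabled = set(always_on)
    for tier, ids in TIERS.items():
        if tier <= max_tier:
            enabled.update(ids)
    return sorted(enabled)


day_one = enabled_checkers(0, always_on=MINIMAL_FACTORY)
after_tier_two = enabled_checkers(2)
everything = enabled_checkers(4)
```

Growing the factory then means raising `max_tier` or adding an ID to `always_on`, not restructuring the pipeline.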

3. Optimize Cost vs. Depth

Some checkers are free and fast (ESLint: <1 second). Some are expensive but thorough (CodeQL: minutes, deep data-flow analysis).

You decide the trade-off based on your needs:

- Early-stage startup: Fast and free (Semgrep, Ruff, Istanbul)

- Enterprise with compliance needs: Thorough and paid (CodeQL, Snyk, Drata)

- Hybrid: Free for most code, paid for critical paths

Composability means you can mix and match.
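The hybrid option can be as simple as routing by changed path. This sketch assumes a hypothetical policy where payments and auth code count as critical. The path patterns and the scanner lists are illustrative, not a recommendation.

```python
from fnmatch import fnmatch

# Hypothetical policy: path patterns and scanner names are illustrative.
CRITICAL_PATTERNS = ["services/payments/*", "services/auth/*"]
FAST_SCANNERS = ["semgrep"]
DEEP_SCANNERS = ["semgrep", "codeql"]


def scanners_for(changed_files: list[str]) -> list[str]:
    """Use the deep (slower) scanners only when a critical path changed."""
    touches_critical = any(
        fnmatch(path, pattern)
        for path in changed_files
        for pattern in CRITICAL_PATTERNS
    )
    return DEEP_SCANNERS if touches_critical else FAST_SCANNERS


docs_only = scanners_for(["docs/readme.md"])
payments_change = scanners_for(["services/payments/api/charge.py", "docs/readme.md"])
```

A docs-only change gets the fast path, while anything touching payments pays for the deeper analysis.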

4. Leverage Community Improvements

When Semgrep adds a new security rule, you get it automatically. When Schemathesis improves fuzzing, you benefit immediately. When Grafana releases a new dashboard template, you can import it.

You're not maintaining the tools. The community is.

The Honest Assessment: What's Still Custom

Let's be clear about the 30% that isn't off-the-shelf:

1. Domain-Specific Validation

If you have proprietary definition languages (like Capacitor or Flux in the example), you need custom validators. Smithy CLI's architecture is a good reference, but you're writing the validators yourself.

Time investment: 2-3 weeks per meta-language for basic validation, ongoing maintenance for new features.

2. LLM Judgment Layers

Five checkers require LLM reasoning:

- Requirement Traceability (#9): "Does the code implement the requirements?"

- Architectural Consistency (#10): "Does the code follow architectural patterns?"

- Escaped Defect Analyzer (#14): "Why did this bug escape? What should we fix?"

- Documentation Freshness (#16): "Do the API documentation (OpenAPI) and README match the actual code?"

- Factory Self-Assessment (#20): "How is the factory performing? What should improve?"

These are genuinely custom. You're writing prompts, handling LLM API calls, parsing responses, and feeding results back into the factory.

Time investment: 1-2 weeks per checker for initial implementation, ongoing prompt tuning.
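The shape of an LLM judgment checker is roughly prompt, call, parse, and fail safely. The sketch below is one possible version for requirement traceability. The prompt wording, the JSON verdict schema, and `call_llm` (any function that takes a prompt and returns text) are all assumptions. A fake model stands in so the example runs offline.

```python
import json
from typing import Callable

PROMPT_TEMPLATE = """You are reviewing generated code against a requirement.
Requirement: {requirement}
Code:
{code}
Answer with JSON only: {{"implemented": true|false, "reason": "<one sentence>"}}"""


def check_traceability(requirement: str, code: str, call_llm: Callable[[str], str]) -> dict:
    """Build the prompt, call the model, and parse a strict JSON verdict."""
    prompt = PROMPT_TEMPLATE.format(requirement=requirement, code=code)
    raw = call_llm(prompt)
    try:
        verdict = json.loads(raw)
        implemented = verdict["implemented"]
        if not isinstance(implemented, bool):
            raise ValueError("implemented must be a boolean")
        return {"passed": implemented, "reason": str(verdict.get("reason", ""))}
    except (KeyError, TypeError, ValueError) as exc:
        # Unparseable output is a checker error, not a silent pass.
        return {"passed": False, "reason": f"unparseable LLM response: {exc}"}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model client so the sketch runs offline."""
    return '{"implemented": true, "reason": "Handler validates the order total."}'


ok = check_traceability(
    "Reject orders with negative totals",
    "def create(order): assert order.total >= 0",
    fake_llm,
)
bad = check_traceability("anything", "pass", lambda prompt: "Sure! Looks good.")
```

Treating a malformed response as a failure is a deliberate choice here: a checker that passes whenever the model rambles is not a checker.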

3. Orchestration Logic

The tools exist, but you need to wire them together:

- When does each checker run?

- What happens if a checker fails?

- How do results get aggregated?

- Where do bug tickets go?

This is pipeline-as-code. GitHub Actions, GitLab CI, Jenkins, or custom orchestration.

Time investment: 1-2 weeks for basic pipeline, ongoing refinement.
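Those four questions map onto a small amount of control flow. Here is a minimal sketch, assuming a made-up policy where blocking failures halt the pipeline and advisory failures become tickets. In practice this logic usually lives in your CI system's configuration rather than in Python, and the step names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[], bool]   # returns True on pass
    blocking: bool = True     # blocking failures stop the pipeline


def run_steps(steps: list[Step]) -> dict:
    """Run steps in order; halt on the first blocking failure; aggregate results."""
    report = {"passed": [], "failed": [], "skipped": [], "tickets": []}
    halted = False
    for step in steps:
        if halted:
            report["skipped"].append(step.name)
            continue
        if step.run():
            report["passed"].append(step.name)
            continue
        report["failed"].append(step.name)
        if step.blocking:
            halted = True
        else:
            # Advisory failures become tickets instead of stopping the build.
            report["tickets"].append(f"Fix advisory failure in {step.name}")
    report["ok"] = not halted
    return report


report = run_steps([
    Step("schema-validation", lambda: True),
    Step("style", lambda: False, blocking=False),
    Step("security-scan", lambda: False),
    Step("post-deploy-health", lambda: True),
])
```

In this run the style failure is recorded and ticketed, the security failure halts the pipeline, and the post-deployment check is skipped.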

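Total custom work: 6-10 weeks of calendar time for a full 20-checker factory with custom meta-languages, assuming the items above overlap across engineers. Added up back to back with a single meta-language, the estimates above come to 8-15 engineer-weeks. Less if you use off-the-shelf definition formats.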

Compare that to building everything from scratch: 6-12 months.

The Economic Reality

Let's talk about what this actually costs:

Option 1: Build Everything Custom

- Time: 6-12 months (2-3 engineers)

- Cost: $300K-$600K in engineering time

- Maintenance: Ongoing (every tool you built needs updates)

- Risk: High (building security scanners, observability platforms, chaos tools from scratch is hard)

Option 2: Use Commercial All-In-One

- Time: 2-4 weeks (integration time)

- Cost: $50K-$200K/year in subscriptions (Datadog, Snyk, PagerDuty, and similar)

- Maintenance: Low (vendors handle it)

- Risk: Low (proven tools)

- Flexibility: Low (locked into vendor ecosystem)

Option 3: Compose OSS + Selective Commercial

- Time: 4-10 weeks (integration + custom checkers)

- Cost: $0-$50K/year (free tier or self-hosted for most, commercial for specialized needs)

- Maintenance: Medium (community handles tools, you handle integration)

- Risk: Medium (OSS tools are mature but require expertise)

- Flexibility: High (swap any piece)

For most teams, Option 3 is the sweet spot.

A Starter Stack

If you're starting from zero, here's a minimal stack that covers the foundation:

Core Pipeline:

- Schema validation: Smithy CLI or OpenAPI tools

- Build: Standard compilers

- Tests: Standard test runners + coverage tools

- Security: Semgrep (free)

- Style: ESLint + Prettier or Ruff (free)

Observability:

- Instrumentation: OpenTelemetry (free)

- Storage: Prometheus (free, self-hosted)

- Visualization: Grafana (free tier or self-hosted)

Deployment:

- Health checks: Schemathesis (free)

- Monitoring: Grafana synthetic checks (free tier)

Total cost: $0-$50/month (VM for Prometheus + Grafana)

Time to set up: 1-2 weeks

Checkers covered: 8 of 20 (the critical ones)

From there, you add:

- LLM cost tracking (LiteLLM)

- Chaos engineering (LitmusChaos)

- Advanced security (Trivy, TruffleHog)

- LLM judgment checkers (custom prompts)

Incremental growth as you need it.

The Meta-Lesson

Here's the broader insight:

Best practices have always existed. Mature tools for most of them now exist too. What was missing was the orchestration layer that runs them consistently.

Humans knew to:

- Validate schemas before generating code

- Run security scanners before deploying

- Check test coverage before merging

- Monitor production after deploying

We just couldn't sustain the discipline of running all these tools, every time, without shortcuts.

The factory isn't inventing new tools. It's orchestrating existing tools with machine-level consistency.

And because the tools are composable, you can:

- Start small (5 checkers)

- Grow incrementally (add 1-2 checkers per sprint)

- Swap tools (replace Semgrep with CodeQL if needed)

- Mix free and paid (optimize cost vs. capability)

You're not building a factory from scratch. You're assembling one from mature, battle-tested components.

What's Next

We've talked about 19 of the 20 checkers. There's one we've referenced but not yet fully explored:

Checker #10: Architectural Consistency

This checker compares generated code against architectural design decisions. It checks whether the code respects service boundaries, follows prescribed patterns, and matches the data flow described in the architecture.

The challenge: Most architecture documentation is prose. Prose is ambiguous. LLMs can interpret it, but slowly and inconsistently.

The solution: What if your architecture wasn't documentation—but a machine-readable, queryable, always-current definition?

In the next post, we'll dive into Architecture as Code—the fourth meta-language that makes Checker #10 (and several others) dramatically more powerful. It's the piece that shifts architectural consistency from "LLM interprets prose" to "diff structured definitions against actual code."

Because if your architecture is defined in code, the factory can validate against it. Automatically. Every time.

Next in the series: Architecture as Code: The Living Architecture — Machine-readable, queryable, multi-dimensional, temporal architecture that validates itself. The fourth definition that makes the other three more powerful.
