Part 3 of 10 in Fully Functional Factory

The Anatomy of a Self-Checking System

We've established that machines are better suited than humans for Brittle, Boring, and Buggy work. The economics have flipped. The performance is there. The consistency is guaranteed.

Now comes the practical question: how do you actually build this?

The LLM-Only Trap

Here's what doesn't work: throwing LLMs at everything and hoping for the best.

In the early days of AI-assisted development, the workflow looked like this:

- Prompt an LLM to generate code

- Run the code

- See it fail

- Prompt again with the error

- Repeat until it works (or you give up)

This approach has three fatal flaws:

It's expensive. Every iteration burns tokens. When the LLM is fixing linting issues, regenerating tests, or debugging import errors, you're paying frontier-model prices for work that could be done deterministically for free.

It's inconsistent. Ask an LLM to "write tests" three times, and you'll get three different test structures, three different assertion styles, and three different levels of coverage. There's no guarantee the second attempt is better than the first—it's just different.

It has no memory. LLMs don't learn from their mistakes within your project. If the LLM forgets to handle null checks in Feature 1, it will forget again in Feature 2. There's no accumulated wisdom, no pattern recognition, no architectural taste.

The promise of AI-assisted development was speed and automation. The reality of LLM-only approaches is that they are slow, expensive, and inconsistent.

The Insight: Separate Creation from Validation

Here's the key architectural decision that changes everything:

Don't ask the LLM to check its own work. Build a pipeline that does it automatically.

Think about how traditional software is built:

- You write code (creative work)

- The compiler checks it (deterministic validation)

- Tests run (deterministic validation)

- Linters enforce style (deterministic validation)

- Security scanners find vulnerabilities (mostly deterministic validation)

- Humans review for logic and design (judgment-based validation)

This separation of concerns works because each layer uses the right tool for the job.

Now apply that same principle to AI-generated code:

- Humans create the vision and the meta-models with the help of LLMs (creative work)

- Generators create the boilerplate (programmatic generative work)

- LLM generates business logic (generative work)

- Linters check syntax (deterministic validation)

- Tests check behavior (deterministic validation)

- Security scanners check for vulnerabilities (deterministic validation)

- Another LLM checks qualitative and architectural consistency (judgment-based validation)

The LLM doesn't need to be good at everything.
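One way to picture this separation, as a minimal sketch rather than a prescribed implementation: each stage is a plain function, the deterministic stages run first, and the LLM reviewer only runs once they pass. The names here (`Stage`, `run_pipeline`, the stub checks) are hypothetical and stand in for real tools.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Stage:
    name: str
    kind: str                     # "deterministic" or "llm"
    check: Callable[[str], bool]  # returns True when the code passes


def run_pipeline(code: str, stages: list[Stage]) -> list[tuple[str, bool]]:
    """Run deterministic stages first, then LLM stages; stop at the first failure."""
    # sorted() is stable, so stages keep their relative order within each kind.
    ordered = sorted(stages, key=lambda s: s.kind != "deterministic")
    results = []
    for stage in ordered:
        passed = stage.check(code)
        results.append((stage.name, passed))
        if not passed:
            break  # fail fast: later, more expensive stages never run
    return results


# Stub checks standing in for a linter, a test runner, and an LLM reviewer.
stages = [
    Stage("architecture review", "llm", lambda code: True),
    Stage("lint", "deterministic", lambda code: "\t" not in code),
    Stage("tests", "deterministic", lambda code: "def " in code),
]

print(run_pipeline("def add(a, b):\n    return a + b\n", stages))
print(run_pipeline("x = 1\n", stages))
```

In the second call the stub test stage fails, so the LLM review is never invoked and no tokens are spent on code that was already known to be broken.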

The Four Tiers of Checking

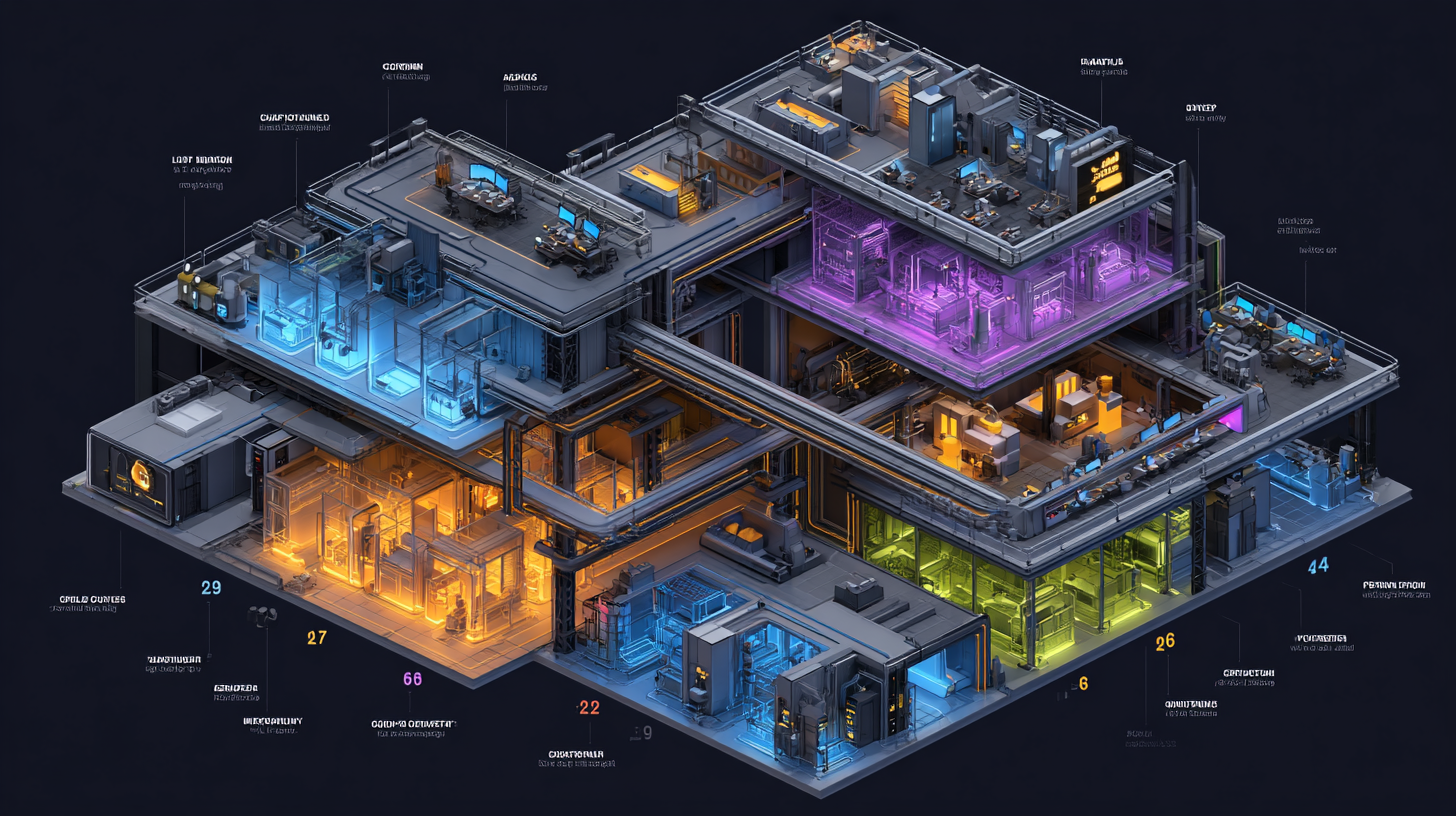

A well-designed factory organizes its quality gates into four tiers, ordered by when they run and what they protect against:

Tier 1: Foundation (You Need These Before Anything Else)

These are the checkers that prevent cascade failures—if these fail, everything downstream is broken, so catch problems here first:

1. Schema Validation (Programmatic, zero tokens)

- Validates that your API definitions, data models, and UI component specs are syntactically correct and internally consistent

- This is your single point of failure: if the definitions are wrong, all generated code is wrong

- Example tools: Smithy CLI for API validation, custom validators for proprietary meta-languages
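As a rough illustration of "internally consistent", here is a sketch of a custom validator that checks every field type resolves to a known shape. The dictionary format and the `PRIMITIVES` set are assumptions made for this example; they are not Smithy's model format.

```python
PRIMITIVES = {"string", "integer", "boolean", "timestamp"}  # assumed built-in types


def validate_definitions(shapes: dict[str, dict[str, str]]) -> list[str]:
    """Return a list of errors; an empty list means the definitions are consistent."""
    errors = []
    for shape_name, fields in shapes.items():
        if not fields:
            errors.append(f"{shape_name}: shape has no fields")
        for field_name, type_name in fields.items():
            # A field type must be a primitive or another shape defined in the model.
            if type_name not in PRIMITIVES and type_name not in shapes:
                errors.append(f"{shape_name}.{field_name}: unknown type '{type_name}'")
    return errors


shapes = {
    "User": {"id": "string", "address": "Address"},
    "Order": {"id": "string", "buyer": "Usr"},  # typo: should be "User"
    "Address": {"city": "string"},
}
print(validate_definitions(shapes))  # ["Order.buyer: unknown type 'Usr'"]
```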

2. Pipeline Metrics Collector (Programmatic, zero tokens)

- Records timestamps, token usage, pass/fail status at every stage

- Pure observation—doesn't block, just measures

- This is your prerequisite for improvement: you can't optimize what you don't measure

- Example tools: OpenTelemetry for instrumentation, Prometheus for storage, Grafana for visualization
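A collector of this kind can be sketched with nothing but the standard library. The `MetricsCollector` class and its `observe` method are hypothetical names; a real setup would more likely emit these records through OpenTelemetry than keep them in a list.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class StageRecord:
    stage: str
    started_at: float
    duration_s: float
    tokens: int
    passed: bool


@dataclass
class MetricsCollector:
    records: list = field(default_factory=list)

    @contextmanager
    def observe(self, stage: str):
        """Time a stage and record its outcome without ever blocking it."""
        outcome = {"tokens": 0, "passed": True}
        started_at = time.time()
        t0 = time.perf_counter()
        try:
            yield outcome  # the stage fills in tokens / passed as it runs
        except Exception:
            outcome["passed"] = False
            raise  # observation only: the failure still propagates
        finally:
            self.records.append(
                StageRecord(stage, started_at, time.perf_counter() - t0,
                            outcome["tokens"], outcome["passed"])
            )


metrics = MetricsCollector()
with metrics.observe("lint") as outcome:
    outcome["passed"] = True
with metrics.observe("llm_review") as outcome:
    outcome["tokens"] = 1200
    outcome["passed"] = False

for record in metrics.records:
    print(record.stage, record.tokens, record.passed)
```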

3. Contract Compatibility (Programmatic, zero tokens)

- When definitions change, diffs old vs. new and flags breaking changes

- Prevents deploying changes that break existing consumers

- Recommends semantic version bumps (major/minor/patch)

- Example tools: Smithy Diff, Pact for contract testing, openapi-diff
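To make the diff-and-recommend step concrete, here is a deliberately simplified sketch. It assumes each definition is a dictionary of operations with `required` and `optional` input fields, and it only models input-side rules; real tools such as Smithy Diff or openapi-diff apply far more rules than this.

```python
def recommend_bump(old: dict, new: dict) -> tuple[str, list[str]]:
    """Compare two API definitions and suggest a semantic version bump."""
    breaking, additive = [], []
    for op, spec in old.items():
        if op not in new:
            breaking.append(f"operation removed: {op}")
            continue
        known = set(spec["required"]) | set(spec["optional"])
        # Existing callers do not send newly required inputs, so this breaks them.
        for name in sorted(set(new[op]["required"]) - set(spec["required"])):
            breaking.append(f"{op}: input now required: {name}")
        # Brand-new optional inputs are safe for existing callers.
        for name in sorted(set(new[op]["optional"]) - known):
            additive.append(f"{op}: optional input added: {name}")
    for op in sorted(set(new) - set(old)):
        additive.append(f"operation added: {op}")

    if breaking:
        return "major", breaking
    if additive:
        return "minor", additive
    return "patch", []


old_api = {
    "GetUser": {"required": ["id"], "optional": []},
    "ListUsers": {"required": [], "optional": ["limit"]},
}
new_api = {
    "GetUser": {"required": ["id", "tenant"], "optional": []},
    "ListUsers": {"required": [], "optional": ["limit", "cursor"]},
}
print(recommend_bump(old_api, new_api))
# ('major', ['GetUser: input now required: tenant'])
```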

4. Generated Code Integrity (Programmatic, zero tokens)

- Verifies that deterministic generators produced compilable, correct output before LLMs touch it

- If generators are broken, catch it before the LLM starts hallucinating around the problems

- Example tools: Language compilers (tsc, javac, rustc), ts-morph for AST validation

All four are programmatic. All four are zero-token. All four run in seconds.

Tier 2: Quality Gates (Harden What's Already Working)

These are the checkers that enforce baseline quality standards: the checks humans always intended to run but often skipped.

5. Build & Compile (Programmatic, zero tokens)

- Compiles the full project (generated boilerplate + LLM-written business logic)

- Reports exact error locations

- Blocks pipeline on failure

- This is just running your standard compiler—nothing custom needed

6. Test Coverage Gate (Hybrid, minimal tokens)

- Programmatic: Runs tests, measures coverage, checks against threshold (e.g., 80%)

- LLM (only if below threshold): Analyzes uncovered paths and generates additional tests

- Most of the time, this is zero-token. The LLM only kicks in when coverage is insufficient.

- Example tools: Istanbul/nyc (JS/TS), JaCoCo (Java), coverage.py (Python), Codecov for enforcement
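The programmatic-first shape of this gate can be sketched as follows. Both callables are placeholders: `measure` would wrap a real coverage tool and `generate_tests` would wrap an LLM call. The `max_llm_rounds` cap is an assumption added here so the loop always terminates.

```python
from typing import Callable


def coverage_gate(
    measure: Callable[[], float],
    generate_tests: Callable[[float], None],
    threshold: float = 0.80,
    max_llm_rounds: int = 2,
) -> dict:
    """Measure coverage; call the LLM only while coverage is below the threshold."""
    llm_rounds = 0
    coverage = measure()
    while coverage < threshold and llm_rounds < max_llm_rounds:
        generate_tests(coverage)  # the only step that spends tokens
        llm_rounds += 1
        coverage = measure()      # re-measure after the new tests are added
    return {"coverage": coverage, "passed": coverage >= threshold, "llm_rounds": llm_rounds}


# Fake measurements: 72% on the first run, 85% after one round of generated tests.
readings = iter([0.72, 0.85])
llm_calls = []
result = coverage_gate(lambda: next(readings), llm_calls.append)
print(result)  # {'coverage': 0.85, 'passed': True, 'llm_rounds': 1}

# The common case: coverage is already sufficient, so the LLM is never called.
print(coverage_gate(lambda: 0.91, llm_calls.append))
```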

7. Style & Convention (Programmatic, zero tokens)

- Runs linters and formatters with your standard config

- Auto-fixes what it can, reports what it can't

- Remove the LLM entirely. Linting is a solved, deterministic problem.

- Example tools: ESLint + Prettier (JS/TS), Ruff (Python), Biome, SonarQube

8. Security Scanner (Hybrid, minimal tokens)

- Programmatic: Runs SAST tools (Semgrep, Bandit), dependency scanners (Trivy, npm audit), secret detection (TruffleHog)

- LLM (targeted): Analyzes only the LLM-generated business logic for logic-level flaws that pattern-matching can't catch (authorization bypass, IDOR, business logic manipulation)

- The programmatic layer catches the large majority of issues (on the order of 95%). The LLM handles the edge cases.

- Example tools: Semgrep for custom rules, Trivy for dependencies, TruffleHog for secrets

Two of four are fully programmatic. Two are hybrid, programmatic-first.

Tier 3: Intelligence Layer (Make the Factory Smarter)

These are the checkers that require judgment, reasoning, or contextual understanding, together with the programmatic guardrails that surround them. This is where LLMs add value that deterministic tools can't easily provide:

9. Requirement Traceability (LLM)

- Reads the original requirement, the ticket, and the generated code

- Verifies that every requirement is actually addressed by the implementation

- Flags requirements with no corresponding code path

- This is custom LLM work—no off-the-shelf tool reads prose and compares it to code semantically

10. Architectural Consistency (LLM, but can be partially programmatic with structured architecture)

- Compares generated code against architectural design decisions

- Checks: Does it use the prescribed patterns? Respect service boundaries? Follow the data flow?

- This is the "architectural taste" that LLM-only approaches lack

- Hybrid approach: Use ArchUnit/Dependency-Cruiser for structural rules programmatically, LLM for behavioral patterns

11. Cost Guard (Programmatic, zero tokens)

- Tracks token spend per feature, per run

- Kills runaway agents burning tokens without converging

- Enables measuring rework rate (how often LLM output gets rejected)

- Example tools: LiteLLM or Helicone for unified tracking and budget enforcement
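The core logic of such a guard is small. The sketch below is a hand-rolled illustration with hypothetical names (`CostGuard`, `BudgetExceeded`); it does not use the LiteLLM or Helicone APIs, which provide their own budget features.

```python
class BudgetExceeded(RuntimeError):
    """Raised to stop an agent that keeps spending without converging."""


class CostGuard:
    def __init__(self, max_tokens: int, max_attempts: int):
        self.max_tokens = max_tokens
        self.max_attempts = max_attempts
        self.tokens_spent = 0
        self.attempts = 0
        self.rejected = 0

    def record(self, tokens: int, accepted: bool) -> None:
        """Log one LLM attempt; raise if a rejected attempt exhausts the budget."""
        self.tokens_spent += tokens
        self.attempts += 1
        if accepted:
            return  # the agent converged, nothing to kill
        self.rejected += 1
        if self.tokens_spent > self.max_tokens or self.attempts >= self.max_attempts:
            raise BudgetExceeded(
                f"{self.tokens_spent} tokens spent across {self.attempts} attempts "
                "without an accepted result"
            )

    @property
    def rework_rate(self) -> float:
        """Share of attempts whose output was rejected."""
        return self.rejected / self.attempts if self.attempts else 0.0


guard = CostGuard(max_tokens=10_000, max_attempts=5)
stopped = False
try:
    for _ in range(5):
        guard.record(tokens=4_000, accepted=False)  # an agent that never converges
except BudgetExceeded as exc:
    stopped = True
    print("killed:", exc)
print("rework rate:", guard.rework_rate)
```

Here the third rejected attempt pushes spend past the 10,000-token budget, so the run is stopped before the remaining attempts are made.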

12. Post-Deployment Health (Programmatic, zero tokens)

- Auto-generates integration tests from API specs

- Hits every endpoint with valid/invalid inputs immediately after deploy

- Checks response codes, schemas, and latency

- Creates bug tickets for failures

- Example tools: Schemathesis (auto-generates tests from OpenAPI), k6 for load/health checks

13. Regression Detector (Programmatic, zero tokens)

- Replays production requests against the new deployment

- Flags endpoints that return different results for the same inputs

- Ensures new features don't break old functionality

- Example tools: Keploy (records real traffic and replays it), Pact for contract tests, Diffy for response diffing
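The replay-and-compare idea reduces to a short loop. This sketch assumes recorded traffic is available as (request, baseline response) pairs and that the new deployment can be called through a `handler` function; both are stand-ins for what a tool like Keploy or Diffy would do over real HTTP. The `ignore` parameter is an assumption added to skip fields that legitimately change between runs.

```python
def find_regressions(recorded, handler, ignore=("timestamp",)):
    """Replay recorded requests and return those whose responses changed."""
    def comparable(response: dict) -> dict:
        # Drop volatile fields so they do not show up as false regressions.
        return {k: v for k, v in response.items() if k not in ignore}

    regressions = []
    for request, baseline in recorded:
        current = handler(request)
        if comparable(current) != comparable(baseline):
            regressions.append(request)
    return regressions


recorded = [
    ({"path": "/users/1"}, {"status": 200, "name": "Ada", "timestamp": 1}),
    ({"path": "/users/2"}, {"status": 200, "name": "Lin", "timestamp": 2}),
]


def new_deployment(request: dict) -> dict:
    """Fake handler for the new build: it changes the casing of one user's name."""
    names = {"/users/1": "Ada", "/users/2": "LIN"}
    return {"status": 200, "name": names[request["path"]], "timestamp": 99}


print(find_regressions(recorded, new_deployment))  # [{'path': '/users/2'}]
```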

Two require full LLM reasoning. Three are programmatic infrastructure.

Tier 4: Continuous Improvement (The Factory Learns)

These are the checkers that make the factory self-improving—they analyze the factory's own performance and recommend improvements:

14. Escaped Defect Analyzer (LLM)

- When a bug escapes to production, performs root cause analysis

- Traces back through every checker and identifies which one should have caught it and why it didn't

- Recommends improvements to prompts, thresholds, or test strategies

- This is what turns your factory from a static pipeline into a learning system

15. Prompt Effectiveness Tracker (Hybrid)

- Programmatic: Records which prompts were used, retry counts, first-pass success rates

- LLM (periodic): Analyzes patterns to identify prompts that consistently fail, suggests rewrites

- Your prompts are critical assets—knowing which ones work is essential for optimization

- Example tools: Langfuse or LangSmith for prompt versioning and analytics

16. Documentation Freshness (Hybrid)

- Programmatic: Detects drift between code and documentation (OpenAPI specs vs. actual endpoints, README setup instructions vs. actual scripts, dependencies list vs. package.json)

- LLM: Generates suggested updates to keep docs current (API descriptions, README how-to-run sections, dependency quirks and gotchas)

- Ensures OpenAPI/Swagger stays synchronized, README.md is accurate and complete for new developers

- Prevents documentation rot that makes software difficult to understand and run
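The programmatic half of this checker is essentially a set comparison. In the sketch below, endpoints are represented as `"METHOD /path"` strings, which is an assumption for illustration; in practice the two sets would be extracted from the OpenAPI spec and from the application's route table.

```python
def doc_drift(documented: set[str], implemented: set[str]) -> dict[str, list[str]]:
    """Report endpoints missing from the docs and docs for endpoints that no longer exist."""
    return {
        "undocumented": sorted(implemented - documented),
        "stale": sorted(documented - implemented),
    }


documented = {"GET /users", "GET /users/{id}", "DELETE /users/{id}"}
implemented = {"GET /users", "GET /users/{id}", "POST /users"}

drift = doc_drift(documented, implemented)
print(drift)
# {'undocumented': ['POST /users'], 'stale': ['DELETE /users/{id}']}
```

Only the entries in this report would need to be handed to the LLM for suggested wording, which keeps the token spend proportional to the drift rather than to the size of the docs.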

17. Performance Benchmark (Programmatic, zero tokens)

- Runs load tests against deployed services

- Measures response times, throughput, and resource utilization under load

- Compares against defined SLOs

- Example tools: k6 (integrates with Grafana), Locust, Gatling
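Comparing results against SLOs usually means comparing latency percentiles against limits. The sketch below uses the nearest-rank percentile definition and made-up latency samples; the function names and the SLO values are illustrative assumptions, and a load-testing tool such as k6 would normally compute these figures for you.

```python
import math


def percentile(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def check_slos(latencies_ms: list[float], slos_ms: dict[int, float]) -> dict:
    """slos_ms maps a percentile (e.g. 95) to its maximum allowed latency in ms."""
    report = {}
    for p, limit in slos_ms.items():
        observed = percentile(latencies_ms, p)
        report[f"p{p}"] = {"observed_ms": observed, "limit_ms": limit, "ok": observed <= limit}
    return report


# Ten made-up samples with one slow outlier.
latencies_ms = [120, 95, 110, 480, 105, 130, 98, 102, 115, 101]
report = check_slos(latencies_ms, {50: 150, 95: 300})
for name, row in report.items():
    print(name, row)
```

With these samples the median passes comfortably while the 95th percentile fails, which is the kind of tail problem an average would hide.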

18. Chaos Resilience (Hybrid)

- LLM: Reads architecture docs to identify failure scenarios (DB outage, service timeout, network partition)

- Programmatic: Executes chaos experiments using fault injection tools

- Validates that factory output is resilient, not just correct

- Example tools: LitmusChaos or Chaos Mesh for Kubernetes environments

19. Compliance & Audit Trail (Programmatic, zero tokens)

- Aggregates all checker results, agent decisions, and human feedback

- Links every line of deployed code back to its originating requirement and architecture decision

- Creates evidence artifacts for compliance (CMMI, SOC 2, regulated industries)

- Example tools: in-toto for supply chain integrity, Sigstore for artifact signing

20. Factory Self-Assessment (LLM)

- Reviews aggregated metrics across recent runs

- Identifies trends (rising costs, recurring failures, degrading performance)

- Generates "Factory Health Report" with prioritized improvement recommendations

- This is the checker that checks all the other checkers

Worth mentioning: Teams might add context-specific checkers like Developer Setup Validation (programmatic: actually runs the README setup steps in a clean container to verify new developers can onboard), Environment Variable Completeness (validates all required env vars are documented with examples), or Error Message Clarity & Consistency (hybrid: checks that exceptions include actionable guidance and follow consistent format/structure/tone across the codebase). The 20 above cover the universal needs; you may have domain-specific ones.

Two require full LLM analysis. Three are hybrid. Two are fully programmatic.

The Token Economics in Practice

Let's add it up. In one possible implementation of this 20-checker system:

- 11 checkers are fully programmatic (zero tokens): #1, #2, #3, #4, #5, #7, #11, #12, #13, #17, #19

- 5 checkers are hybrid (programmatic first, LLM only when needed): #6, #8, #15, #16, #18

- 4 checkers require full LLM calls: #9, #10, #14, #20

55% of your quality pipeline is free. 25% is mostly free. Only 20% requires LLM reasoning.

For a typical feature, here's what that might cost:

| Checker Type | Count | Cost per Checker | Total |
|---|---|---|---|
| Programmatic | 11 | $0 | $0 |
| Hybrid (programmatic succeeds) | 4 | $0 | $0 |
| Hybrid (LLM needed) | 1 | $0.50 | $0.50 |
| Full LLM | 4 | $1.25 | $5.00 |
| Total per feature | 20 | | $5.50 |

Compare that to:

- A production security incident: $10,000–$1,000,000

- A deployment rollback: $5,000 in engineer time

- Onboarding delays from wrong docs: $2,000 per new engineer

- Accumulated technical debt: $50,000+ over codebase lifetime

The comprehensive approach is now cheaper than the shortcuts.
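The arithmetic behind that claim is easy to check. This snippet only uses the illustrative figures quoted above (one hybrid checker falling back to the LLM at $0.50, four full-LLM checkers at $1.25 each, and $5,000 for a rollback); none of them are measured values.

```python
# Illustrative per-feature figures taken from the cost table and comparison list.
HYBRID_LLM_CALLS, HYBRID_LLM_COST = 1, 0.50
FULL_LLM_CALLS, FULL_LLM_COST = 4, 1.25
ROLLBACK_COST = 5_000  # one deployment rollback, in engineer time

cost_per_feature = HYBRID_LLM_CALLS * HYBRID_LLM_COST + FULL_LLM_CALLS * FULL_LLM_COST
features_per_rollback = ROLLBACK_COST / cost_per_feature

print(f"cost per feature: ${cost_per_feature:.2f}")
print(f"features checked for the price of one rollback: {features_per_rollback:.0f}")
# cost per feature: $5.50
# features checked for the price of one rollback: 909
```

Under these assumptions, a single avoided rollback pays for running the full pipeline on roughly 900 features.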

The Architecture Principle

Here's the design philosophy that makes this work:

Use deterministic automation wherever possible. Use LLMs only where judgment is required.

This isn't "AI does everything." It's "AI does the creative parts. Automation does the tedious parts. Together they execute the discipline we've been failing to maintain."

The checkers that validate syntax? Deterministic. The checkers that run tests? Deterministic. The checkers that scan for known vulnerabilities? Deterministic. The checkers that measure performance? Deterministic.

The checkers that validate requirements are met? LLM. The checkers that verify architectural consistency? LLM. The checkers that analyze escaped defects? LLM.

Each layer uses the right tool for the job.

What This Enables

With this architecture in place:

- Consistency becomes default. Every feature undergoes the same 20 checks. No exceptions. No shortcuts.

- Quality becomes measurable. The metrics collector tracks pass/fail rates, retry counts, and token costs. You see trends in real-time.

- Improvement becomes systematic. The escaped defect analyzer and prompt effectiveness tracker identify what's not working and recommend fixes.

- Confidence becomes justified. You're not hoping the code is good. You have evidence that it passed 20 independent gates.

This is what separates a script from a factory.

A script runs the same commands every time. A factory runs the same process every time, measures its effectiveness, and improves itself.

The Missing Piece

But there's still a gap.

You can build this 20-checker pipeline. You can run it on every feature. You can collect metrics and measure improvement.

But how do you know if you're actually getting better?

What's the difference between "we run 20 checks" and "we run a mature, disciplined process"?

How do you know if you're at the level of elite-performing teams, or just going through the motions?

In the next post, we'll talk about the maturity ladder—the progression from ad hoc development to measured, optimizing systems. We'll look at why most organizations get stuck at defined processes, and how AI finally makes quantitative management and continuous optimization practical.

Because running checks is good. But knowing that your process is improving, quantitatively, over time? That's what turns a factory into a competitive advantage.

Next in the series: The Maturity Ladder: Why Organizations Get Stuck — Process maturity principles and why advancement is hard for humans but natural for machines.
