Part 1 of 10 in Fully Functional Factory

The Best Practices We Abandoned

You know that feeling when you're three days from a launch deadline, and someone asks, "Did we update the architecture docs?"

Of course you didn't. You were busy shipping actual features.

Or when the security scan surfaces 47 findings, but 43 are false positives, and you're already late to standup. You'll triage them later. (You won't.)

Or when the test suite has been at 62% coverage for six months, and everyone agrees it should be higher, but nobody has time to write boring tests for edge cases that will probably never happen anyway.

We've all been here. And we all know better.

The List

Every engineering organization has the list—the practices everyone agrees matter but somehow never get done consistently:

- Comprehensive test coverage — Not just the happy path, but edge cases, error handling, load testing

- Security scanning on every commit — SAST, dependency checks, secret detection, not just when compliance asks

- Architecture documentation that stays current — Not last year's Lucidchart diagram or wiki page, but living artifacts that reflect reality

- Post-deployment validation — Automated health checks, regression testing, chaos experiments

- Performance benchmarking — SLOs that are measured, not hoped for

- Code quality enforcement — Linters, formatters, complexity analysis, actually configured and followed

- Dependency management — CVE monitoring, upgrade cycles, license compliance

- Systematic code review — Not "LGTM" in 30 seconds, but thoughtful analysis

- Requirement traceability — Knowing every line of code maps back to a business need

- Causal analysis on incidents — Root cause, corrective action, process improvement—not just "hotfix and move on."

You've probably championed some of these yourself. Maybe you've been that person pushing for better process discipline, quality standards, or formalized reviews. Maybe you've written the Slack message that starts with "Hey team, we really need to start..." and then watched as good intentions dissolve under the pressure of shipping.

The Frameworks That Tried

This isn't a new problem. Entire industries have formed around helping organizations do software better:

- CMMI (Capability Maturity Model Integration) — decades of research on process maturity, with detailed practice areas and maturity levels

- DORA (DevOps Research and Assessment) — years of survey data correlating practices with performance

- ISO 9001 — quality management systems with formal documentation and continuous improvement

- ITIL — IT service management with incident response and change control

- Your company's own hard-won standards — the internal wiki page titled "Engineering Best Practices" that someone spent weeks writing

These frameworks aren't wrong. They're often quite right. CMMI's emphasis on quantitative management. Brilliant. DORA's measurement of deployment frequency and change failure rate. Essential. ISO's focus on documented, repeatable processes. Exactly what chaotic startups need.

The problem isn't the frameworks. The problem is execution.
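To make the DORA example concrete, here is a minimal sketch of how deployment frequency and change failure rate could be computed from a deployment log. The `Deployment` record and its field names are hypothetical, and the definitions are simplified; DORA's own survey definitions are more nuanced.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deployment:
    """One production deployment (hypothetical record shape)."""
    day: date
    caused_failure: bool  # True if it led to an incident or rollback


def deployment_frequency(deployments: list[Deployment], window_days: int) -> float:
    """Average number of deployments per day over the window."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return len(deployments) / window_days


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure (0.0 if there were none)."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)


# Illustrative data only: four deployments in one week, one of which failed.
history = [
    Deployment(date(2024, 1, 1), caused_failure=False),
    Deployment(date(2024, 1, 2), caused_failure=True),
    Deployment(date(2024, 1, 4), caused_failure=False),
    Deployment(date(2024, 1, 7), caused_failure=False),
]

print(f"deploys/day: {deployment_frequency(history, window_days=7):.2f}")
print(f"change failure rate: {change_failure_rate(history):.0%}")
```

The arithmetic is trivial. The hard part, as the rest of this post argues, is keeping the underlying log accurate week after week.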

Why We Don't Do It

Let's be honest about what happens when deadline pressure hits:

Monday morning: "We're going to do this right. TDD, comprehensive coverage, security scanning, the works."

Thursday afternoon: "We'll add those tests after launch."

Friday deploy: "Just merge it. We tested manually. It's fine."

Three months later: "Why is our technical debt so high?"

This isn't a failure of discipline. This isn't laziness. This is economics.

Here's what nobody says out loud: We didn't fail—the system was too costly and difficult for humans to maintain consistently.

The reality of human-driven best practices:

- Comprehensive testing? That's often 2-3x as much code to write and maintain as the feature itself

- Security scanning? Someone has to triage hundreds of findings, most of which are false positives

- Architecture docs? By the time you finish writing them, they're already out of date

- Post-deployment validation? That's a whole separate test harness to build and maintain

- Performance benchmarking? Load testing environments, synthetic traffic generation, metrics analysis—that's a team's worth of work

- Systematic code review? Every PR now takes hours, not minutes, creating a review backlog that blocks progress

- Incident analysis? That's a 4-hour blameless postmortem for every production issue, plus documentation, plus process changes, plus follow-through

The boring stuff—the repetitive, tedious, exhaustive checking—costs more than the creative work. And when you're understaffed, over-committed, and under-deadline, it's rational to skip it.

Not because you don't care. Because you're optimizing for survival.

The Consistency Problem

Even when organizations commit to best practices, humans can't maintain consistency.

The security scan runs on Monday. By Thursday, you're bypassing it because the build is broken and you need to deploy. You'll fix it next sprint. (You won't—you'll have new fires.)

The test coverage gate is set at 80%. Then a critical bug needs a hotfix, and someone merges with 45% coverage "just this once." The gate is now more of a suggestion.

The architecture review board meets every two weeks. Then three people go on vacation, and the meeting gets skipped. Then it happens again. Six months later, nobody remembers what the board was supposed to review.

This isn't because humans are bad at their jobs. It's because humans get tired, distracted, overwhelmed, and reprioritized. We take shortcuts under pressure. We forget steps. We make exceptions "just this once" that become "just the way we do things."

We're not designed for exhaustive, repetitive, never-ending process adherence. We're designed for creative problem-solving, judgment calls, and adaptation.

The system was built for machines, but operated by humans. And that mismatch is expensive.
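The 80% coverage gate from the example above can be sketched in a few lines. This is an illustration under stated assumptions, not any particular CI tool's API: `check_coverage_gate` and `GateResult` are hypothetical names, and the coverage figure is assumed to come from whatever coverage tool the project already runs. The point is that the function has no "just this once" parameter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    passed: bool
    message: str


def check_coverage_gate(coverage_percent: float, threshold: float = 80.0) -> GateResult:
    """Pass only if measured coverage meets the threshold. There is no override flag."""
    if not 0.0 <= coverage_percent <= 100.0:
        raise ValueError("coverage_percent must be between 0 and 100")
    if coverage_percent >= threshold:
        return GateResult(
            True,
            f"coverage {coverage_percent:.1f}% meets the {threshold:.1f}% gate",
        )
    shortfall = threshold - coverage_percent
    return GateResult(
        False,
        f"coverage {coverage_percent:.1f}% is {shortfall:.1f} points "
        f"below the {threshold:.1f}% gate",
    )


# The hotfix from the example: 45% coverage against an 80% gate.
hotfix = check_coverage_gate(45.0)
print(hotfix.passed, "-", hotfix.message)
```

A machine applies this check the same way on the first merge and the thousandth. A person under deadline pressure is the one who adds the exception.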

The Hidden Cost

Here's what technical leaders don't talk about enough: the opportunity cost of skipped best practices.

You ship the feature without comprehensive tests. Six months later, a refactor breaks it, but you don't find out until production. Now you're firefighting instead of building the next thing.

You skip the security scan. A critical CVE in a dependency goes unnoticed. When it surfaces in a pentest, you spend two weeks on an emergency upgrade and re-certification.

You don't update the architecture docs. The new engineer on the team makes a reasonable decision based on outdated information, and now you have a pattern inconsistency that will confuse the next engineer.

The cost of skipping best practices isn't paid immediately. It's paid later, with interest, in the form of:

- Incidents that could have been prevented

- Bugs that escape to production

- Onboarding that takes 3x longer because the documentation is wrong

- Refactors that break things because nobody understood the dependencies

- Security breaches that start from a known-vulnerable library you meant to upgrade

- Technical debt that grows faster than you can pay it down

We know this. Every retrospective surfaces it. Every post-incident review acknowledges it. Every new year's engineering goals include "improve test coverage" and "reduce technical debt."

And yet.

The Question

So here's the uncomfortable question: if we know what we should do, and we know the frameworks that help us do it, and we know the cost of not doing it...

Why don't we do it?

The traditional answers:

- "We need more discipline."

- "We need better prioritization."

- "We need to slow down and do it right."

But these answers assume the constraint is willingness. It's not. The constraint is capacity.

Humans have a finite attention budget. We can write creative code or we can write exhaustive tests, but doing both well, every time, on every feature, forever, is grinding.

Humans have a finite tolerance for tedium. The first security scan is interesting. The 50th consecutive scan where you triage the same false positives is soul-crushing.

Humans have a finite ability to maintain vigilance. The first architecture review is thoughtful. The 100th is a rubber stamp because you're exhausted, and there are six more reviews after this one.

What if the constraint wasn't human attention, but machine capacity?

What if the tedious work—the repetitive, exhaustive, never-ending process adherence—could be done by something that never gets tired, never gets bored, never cuts corners under pressure, and never forgets a step?

What if the things we've been trying to do for decades—comprehensive testing, continuous security scanning, always-current documentation, systematic quality gates, causal analysis on every defect—were suddenly economically practical?

What if best practices stopped being aspirational and started being default?

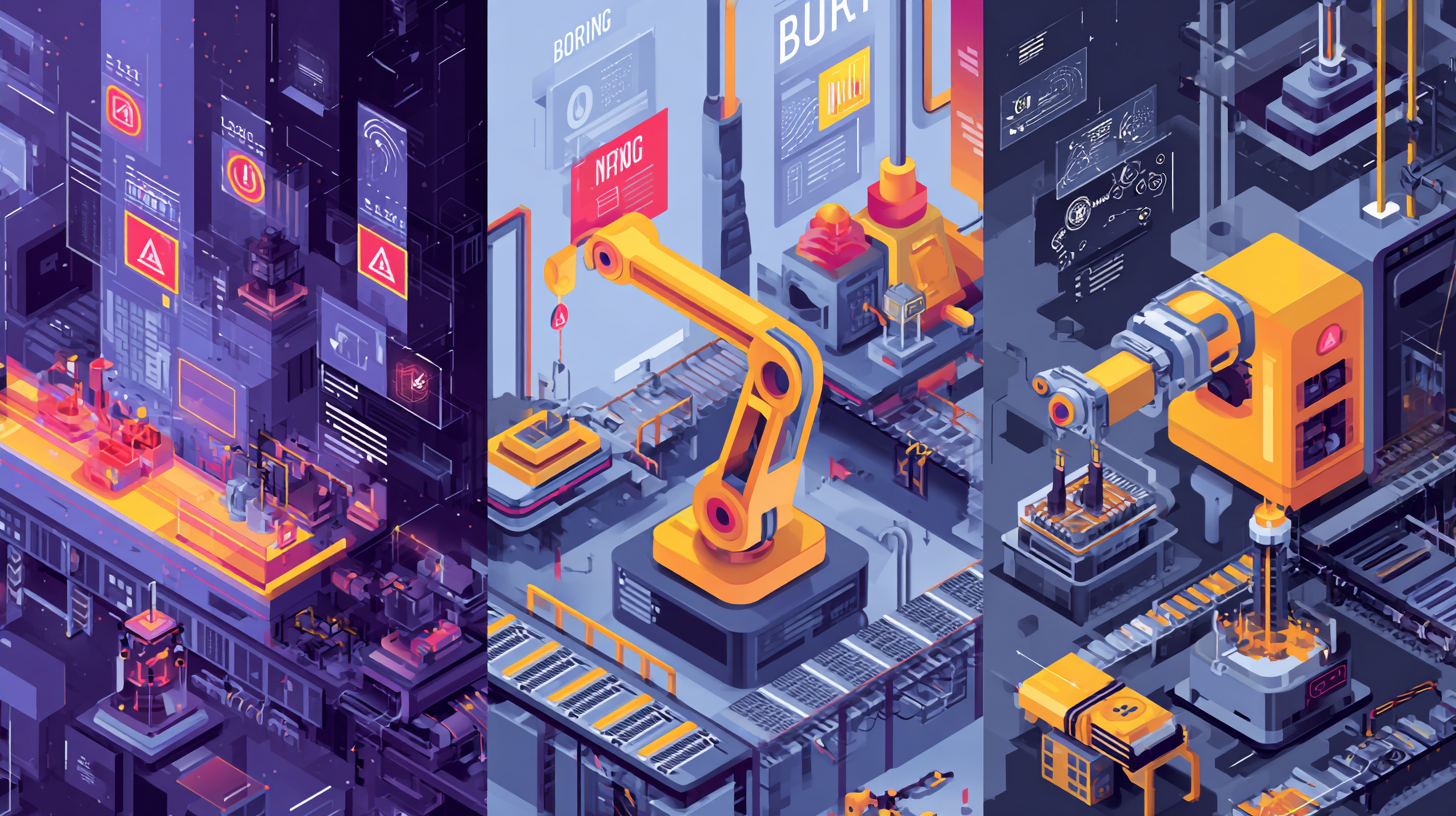

The Brittle, Boring, and Buggy

There's a reason the robotics industry, Boston Dynamics included, points its robots at work that's Dirty, Dangerous, or Dull. Not because humans can't do those jobs (we did them for centuries), but because machines can do them better, more consistently, and without the human cost.

Software has its own version of this problem. There's a class of work that's:

- Brittle — Security-critical, precision-required, easy to break when done carelessly

- Boring — Tedious, monotonous, repetitive tasks humans skip or rush

- Buggy — Error-prone work that needs exhaustive checking, not best-effort

Linting and formatting code. Running test suites. Scanning dependencies for CVEs. Validating that architecture decisions are followed. Checking that every requirement has corresponding code. Measuring performance under load. Post-deployment health checks. Incident root cause analysis.

Humans can do this work. We just can't do it consistently, exhaustively, and forever.

And when the choice is "ship the feature" or "run the full checklist," the checklist loses. Every time.

Not because we're unprofessional. Because we're rational.

What Comes Next

For decades, the answer was "more discipline," "better tooling," or "hire more people."

But what if the answer is simpler: let machines do the machine work?

What if AI agents—the same ones we're already using to generate code—could also be used to execute the exhaustive, repetitive, boring-but-essential practices we keep abandoning?

Not because they're smarter than us. But because they don't get tired. They don't get bored. They don't take shortcuts. They don't forget.

The best practices we've been trying to implement for decades were never wrong. They were just too expensive for humans to maintain.

But machines don't care about tedium. They do have a cost, but that cost keeps falling while their capability keeps rising.

In the next post, we'll talk about what changes when you build a software factory where best practices are the default, not the aspiration. Where comprehensive checking isn't something you hope to get to someday—it's something that happens every time, automatically, because the machines don't know how to skip steps.

Because if we're going to hand off code generation to AI, we'd better also hand off the discipline we've been failing to maintain ourselves.

Next in the series: Why Machines Don't Get Bored — The case for AI-driven quality gates and what changes when the cost of comprehensive checking drops to near-zero.

Fully Functional Factory

1. The Best Practices We Abandoned

2. Why Machines Don't Get Bored

3. The Anatomy of a Self-Checking System

4. The Maturity Ladder: Why Organizations Get Stuck

5. Measuring What Matters: The Metrics You've Always Wanted

6. The Observability Foundation: Watching the Factory Work

7. Self-Healing Pipelines: Closing the Loop

8. Standing on Giants: The Composable Stack

9. Architecture as Code: The Living Architecture

10. Rethinking Your AI Tooling Strategy