Part 4 of 10 in Fully Functional Factory

The Maturity Ladder: Why Organizations Get Stuck

You've built the 20-checker pipeline. You're running it on every feature. Code is flowing through quality gates like it never has before.

But here's the question that keeps you up at night: Are you actually getting better?

Is this just "doing more stuff," or is your process genuinely improving? Are you at the level of elite-performing teams, or are you just going through the motions with more automation?

The Universal Maturity Progression

If you've been in software for more than a few years, you've probably seen this pattern—whether or not anyone explicitly named it:

Phase 1: Chaos — Every project is different. Success depends on individual heroics. You're not sure why some projects succeed and others fail.

Phase 2: Repeatability — You've figured out some patterns that work. Not everything is documented, but the experienced people know what to do.

Phase 3: Standardization — You've written down the process. There's a playbook. New engineers can follow it. You have consistent practices across teams.

Phase 4: Measurement — You're tracking how well the process works. You have dashboards, metrics, trends. You can predict outcomes statistically.

Phase 5: Optimization — You're continuously improving the process based on data. Root cause analysis is standard. Changes are measured for effectiveness.

This isn't new. It's not some Silicon Valley innovation. It's how any complex system matures, whether you're building software, manufacturing cars, or running a hospital.

Frameworks like CMMI (Capability Maturity Model Integration) formalize this ladder with detailed practice areas and assessment criteria. But the underlying progression is universal. You've probably been climbing it—or trying to—your entire career.

Where Most Organizations Actually Live

Here's the uncomfortable truth: most engineering organizations are stuck at managed or defined processes.

Let me show you what that looks like in practice:

Level 0: Incomplete (Almost Nobody)

- No defined process

- Projects succeed or fail based on luck

- Outcomes are completely unpredictable

You're past this. If you're reading this series, your organization has some process, even if it's informal.

Level 1: Initial (Startups, Rapid Growth)

- Process exists but is ad hoc and reactive

- Success depends on individual talent and heroics

- "Whatever it takes to ship" mentality

- No consistency between projects

Many startups live here. And that's okay—early on, speed matters more than process. But you can't scale here.

Level 2: Managed (Most Mid-Size Orgs)

- Projects are planned and tracked

- Basic requirements and configuration management

- Testing and code review are standard

- Process is followed at the project level, but every project manages itself

This is where most organizations stabilize. You have standups, sprints, code reviews, CI/CD. Each team does things mostly right, most of the time.

The problem? Every team interprets "mostly right" differently.

Team A writes tests first. Team B writes them after. Team C writes them "when we have time."

Team A deploys daily. Team B deploys weekly. Team C deploys "when it's ready."

Team A documents everything in Notion. Team B uses Confluence. Team C's documentation is in someone's head.

It works. But it doesn't scale. And you can't learn from it systematically, because there's no consistent process to measure.

Level 3: Defined (Aspirational for Many)

- Standard process defined organization-wide

- Process is documented, understood, and followed consistently

- Tailoring guidelines exist (not every project needs the full process)

- Roles, responsibilities, and work products are clear

This is where most organizations want to be. You've got engineering standards, architectural review boards, defined test coverage thresholds, standardized deployment pipelines.

The new engineer doesn't need to ask "how do we do things here?"—there's a doc for that.

But here's where it gets hard.

Level 4: Quantitatively Managed (Rare)

- Process is not just defined but measured

- Statistical techniques are used to understand variation

- Performance baselines are established quantitatively

- When something deviates from the baseline, you investigate

Example: "Our factory runs 20 checks per feature. On average, 2.3 checks fail on the first pass, requiring rework. Last week, that jumped to 4.1 failures per feature. Why? Root cause: we changed a prompt, and it's producing lower-quality code."

This level requires systematic data collection on every run, every feature, every metric that matters.

For humans, this is exhausting. You need someone—or a team—dedicated to:

- Instrumenting every stage of the pipeline

- Collecting data consistently

- Aggregating it into dashboards

- Monitoring for statistical anomalies

- Investigating deviations

Most organizations try to do this. They set up Datadog or New Relic. They build Grafana dashboards. They have metrics review meetings.

But the data is incomplete. The dashboards go stale. The metrics meetings become box-checking exercises.

Humans can define quantitatively managed processes. We just can't execute them consistently.

Level 5: Optimizing (Almost Mythical)

- The process doesn't just run—it improves itself

- Root cause analysis is standard practice for defects and process problems

- Improvements are piloted, measured, and either adopted or rejected based on data

- Innovation is continuous

Example: "Bug XYZ escaped to production. Root cause analysis shows it passed Checker #8 (Security Scanner) but shouldn't have. Analysis: the SAST rules didn't cover this pattern. Action: add new Semgrep rule. Validation: re-run last 100 features through updated checker. Result: would have caught 3 other latent issues. Adopt the change."

This level requires causal analysis loops on everything that goes wrong, plus measurement of whether the improvements actually work.
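The validation step in that example ("re-run last 100 features through updated checker") can be sketched in a few lines. This is a minimal sketch, not the factory's actual implementation: `FeatureSnapshot`, `replay`, and the two toy checkers are hypothetical names, and a checker is modeled as a plain function that returns True when code passes.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class FeatureSnapshot:
    feature_id: str
    source: str  # the code as it was when the feature shipped


# Assumption: a checker is a function that returns True when the code passes.
Checker = Callable[[str], bool]


def replay(features: Iterable[FeatureSnapshot], old: Checker, new: Checker) -> dict:
    """Run historical features through both checker versions and diff the verdicts."""
    newly_caught, newly_passed = [], []
    total = 0
    for feature in features:
        total += 1
        old_ok, new_ok = old(feature.source), new(feature.source)
        if old_ok and not new_ok:
            newly_caught.append(feature.feature_id)  # latent issue the old rules missed
        elif not old_ok and new_ok:
            newly_passed.append(feature.feature_id)  # the update loosened something
    return {"replayed": total, "newly_caught": newly_caught, "newly_passed": newly_passed}


# Toy checkers: the updated one adds a single rule on top of the old one.
def old_checker(src: str) -> bool:
    return "eval(" not in src


def new_checker(src: str) -> bool:
    return old_checker(src) and "pickle.loads(" not in src


history = [
    FeatureSnapshot("F-101", "data = json.loads(body)"),
    FeatureSnapshot("F-102", "obj = pickle.loads(blob)"),
    FeatureSnapshot("F-103", "result = eval(expr)"),
]
report = replay(history, old_checker, new_checker)
```

Tracking `newly_passed` alongside `newly_caught` is a deliberate choice: a rule change that quietly stops flagging old failures is as worth knowing about as one that finds new ones.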

For humans, this is a full-time job. Multiple full-time jobs. You need:

- Incident analysis teams

- Process improvement working groups

- A/B testing of process changes

- Retrospectives that lead to action, not just feelings

This is why continuously optimizing organizations are almost mythical. Toyota. NASA. Maybe Google at their peak. Organizations that can afford to dedicate entire teams to process improvement.

Everyone else gets stuck at managed or defined processes.

The Quantitative Management Barrier

So why do organizations get stuck before reaching quantitative management?

Not because they don't know how. The frameworks exist. CMMI, ISO 9001, ITIL—they all spell out exactly what it looks like.

Not because they don't care. Every engineering leader wants data-driven decision making. "If you can't measure it, you can't improve it" is practically a cliché.

They get stuck because quantitative management is boring, tedious, and relentless.

Let's be specific about what it actually requires:

1. Instrumentation on Everything

- Every code commit: who wrote it, when, which checkers it passed/failed, how many retries

- Every deployment: timestamp, duration, success/failure, rollback events

- Every incident: time to detect, time to resolve, root cause category

- Every feature: estimated cost, actual cost, estimated time, actual time

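For a 10-person team shipping 50 features/month through a 20-checker pipeline, that's 1,000 checker results before you count a single commit, deployment, or incident. Thousands of data points. Every month. Forever.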
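To make "instrumentation on everything" concrete, here is one possible shape for the per-commit record described above. The field names and the JSON-lines output are assumptions for illustration, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class CheckerResult:
    checker: str
    passed: bool
    retries: int = 0


@dataclass
class CommitRecord:
    commit_sha: str
    author: str
    committed_at: str  # ISO 8601 timestamp in UTC
    results: list[CheckerResult] = field(default_factory=list)

    @property
    def failed_checkers(self) -> list[str]:
        return [r.checker for r in self.results if not r.passed]

    def to_json_line(self) -> str:
        """Serialize to one JSON line, ready to append to a log or ship to a collector."""
        return json.dumps(asdict(self), sort_keys=True)


record = CommitRecord(
    commit_sha="abc123",
    author="dev@example.com",
    committed_at=datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc).isoformat(),
    results=[
        CheckerResult("lint", passed=True),
        CheckerResult("security-scan", passed=False, retries=2),
    ],
)
line = record.to_json_line()
```

One record per commit, one line per record. The same pattern would extend to deployments, incidents, and features, which is exactly why the volume adds up so quickly.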

2. Continuous Monitoring

- Dashboards that someone actually watches

- Alerting when metrics deviate from baseline

- Investigation when alerts fire (not just acknowledgment)

Who has time to watch dashboards? You're busy building features.

3. Statistical Process Control

- Establishing baselines (mean, standard deviation)

- Detecting statistically significant variation

- Distinguishing signal from noise

How many engineers know statistics well enough to do this correctly? How many want to spend their time doing it?
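For a sense of what the baseline math involves, here is a minimal sketch of control limits set at the mean plus or minus three standard deviations. The weekly numbers are made up for illustration, and using the plain sample standard deviation is a simplification; a textbook control chart would pick its estimator based on the chart type.

```python
from statistics import mean, stdev


def control_limits(baseline, sigmas=3.0):
    """Return (lower, center, upper) limits computed from baseline observations."""
    center = mean(baseline)
    spread = stdev(baseline)  # sample standard deviation
    lower = max(0.0, center - sigmas * spread)  # a failure count can't go below zero
    return lower, center, center + sigmas * spread


def out_of_control(value, baseline, sigmas=3.0):
    """True when a new observation falls outside the control limits."""
    lower, _, upper = control_limits(baseline, sigmas)
    return value < lower or value > upper


# Illustrative data: average failed checks per feature over eight earlier weeks.
baseline_weeks = [2.1, 2.4, 2.3, 2.2, 2.5, 2.3, 2.4, 2.2]

spike_is_signal = out_of_control(4.1, baseline_weeks)   # a jump like the one described earlier
wobble_is_signal = out_of_control(2.5, baseline_weeks)  # a value already seen in the baseline
```

The point of the limits is the third bullet: a week at 2.5 is noise, a week at 4.1 is a signal worth investigating.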

4. Causal Analysis

- When something goes wrong, don't just fix it—figure out why it went wrong

- Document the root cause

- Change the process to prevent it from happening again

- Measure whether the change actually worked

A thorough root cause analysis takes 4+ hours. For a single incident. If you have 10 incidents a month, that's 40 hours, a full work week. Just for analysis. Not fixing, just analysis.

This is why organizations get stuck. Quantitative management requires someone—or multiple someones—to do work that is:

- Brittle (data collection must be perfect, or the statistics are wrong)

- Boring (watching dashboards, running statistical tests, writing analysis reports)

- Buggy (humans miss patterns, forget to collect data, make statistical errors)

We've been trying to climb the maturity ladder with human labor. And the ladder gets too expensive to keep climbing.

What AI Changes

Now here's the shift.

Everything that makes quantitative management hard for humans—instrumentation, monitoring, statistical analysis, causal analysis—is trivial for machines.

Instrumentation? Wrap every pipeline stage with OpenTelemetry. Zero human effort after the initial setup. The pipeline instruments itself.
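Here is roughly what "wrap every pipeline stage" means, as a standard-library stand-in rather than real OpenTelemetry code. The decorator, the `STAGE_EVENTS` list, and the two toy stages are all hypothetical; in an actual setup the wrapper body is where a span would be opened and exported.

```python
import functools
import time

# Hypothetical in-memory sink; a real pipeline would export these events instead.
STAGE_EVENTS: list[dict] = []


def instrumented(stage_name: str):
    """Decorator that records status and duration for every call to a stage."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                # Runs on both success and failure, so no call goes unrecorded.
                STAGE_EVENTS.append({
                    "stage": stage_name,
                    "status": status,
                    "duration_s": time.perf_counter() - start,
                })
        return wrapper
    return decorator


@instrumented("lint")
def run_lint(source: str) -> bool:
    return "\t" not in source


@instrumented("security-scan")
def run_security_scan(source: str) -> bool:
    if not source:
        raise ValueError("empty source")
    return "eval(" not in source


run_lint("x = 1")
try:
    run_security_scan("")
except ValueError:
    pass  # the failure is still recorded in STAGE_EVENTS
```

Once the decorator exists, instrumenting a new stage is a one-line change, which is what makes the "zero human effort after setup" claim plausible.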

Continuous monitoring? Prometheus + Grafana with alerting rules. The dashboards never sleep. They never get bored. They never miss a trend.

Statistical process control? Write a script that calculates mean, standard deviation, and control limits on every metric. Run it weekly. Flag outliers automatically.

Causal analysis? When a bug escapes, an LLM reads:

- The bug report

- The code that was deployed

- The checkers that passed/failed

- The prompts that were used

It traces back through the pipeline, identifies which checker should have caught the bug and why it didn't, and recommends a process change.

This used to take a team of humans 4+ hours. Now an LLM can draft the same trace in about 30 seconds.
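Part of that trace is deterministic and doesn't need an LLM at all: narrowing down which checker should have caught the bug. A minimal sketch, assuming each checker declares the defect categories it is meant to cover (the `CheckerRun` type and the category names are hypothetical):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckerRun:
    checker: str
    covers: frozenset  # defect categories this checker claims (assumed taxonomy)
    passed: bool


def suspects(defect_category: str, runs: list) -> list:
    """Checkers that claim this category yet let the change through."""
    return [r.checker for r in runs if defect_category in r.covers and r.passed]


def coverage_gap(defect_category: str, runs: list) -> bool:
    """True when no checker in the pipeline claims this category at all."""
    return not any(defect_category in r.covers for r in runs)


# Checker results recorded for the change that shipped the escaped bug.
runs = [
    CheckerRun("unit-tests", frozenset({"logic"}), passed=True),
    CheckerRun("security-scanner", frozenset({"injection", "secrets"}), passed=True),
    CheckerRun("type-check", frozenset({"type-error"}), passed=True),
]

injection_suspects = suspects("injection", runs)
race_is_uncovered = coverage_gap("race-condition", runs)
```

The two outcomes call for different fixes. A suspect means a checker's rules need tightening. A coverage gap means the pipeline is missing a checker entirely. The LLM's job is the harder part: explaining why the suspect passed and proposing the rule change.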

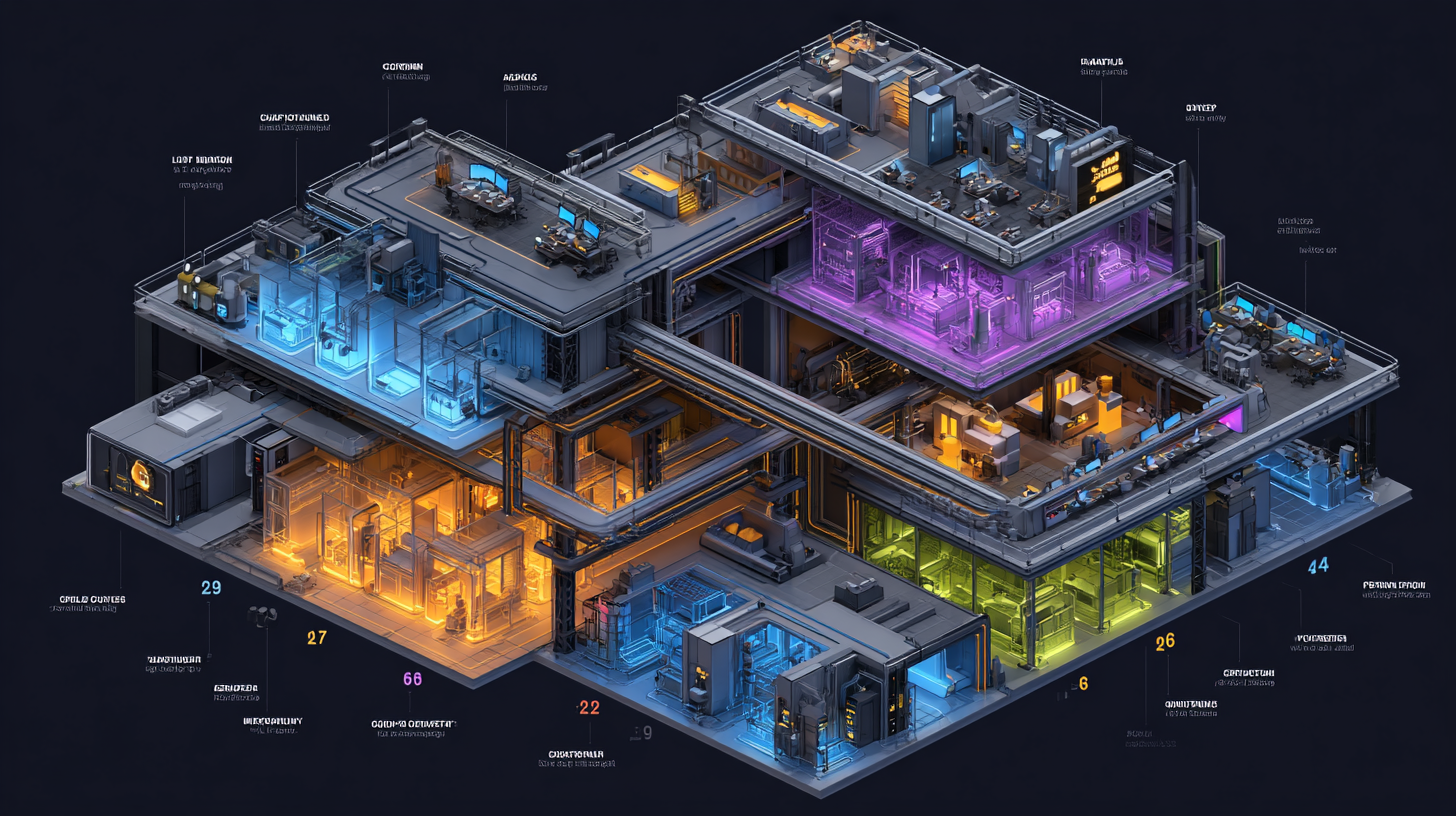

The Factory Maturity Arc

So here's what a well-designed AI factory looks like at each level:

Level 2: Managed (Day 1)

- The pipeline is defined and repeatable

- Basic checks run on every feature

- Results are recorded

Level 3: Defined (Week 1)

- The factory's process is documented (which checkers run, in what order, with what thresholds)

- Tailoring guidelines exist (not every project needs all 20 checkers)

- Verification criteria are formalized (what "pass" means for each checker)

Level 4: Quantitatively Managed (Month 1)

- Pipeline Metrics Collector (#2) is running

- Dashboards show trends: token cost per feature, rework rate, first-pass success rate, time to deploy

- Baselines are established: "A typical CRUD feature costs $5.50 and takes 12 minutes"

- Deviations are detected automatically: "Last week's average was $12.30—investigate"
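The Level 4 numbers above all fall out of per-feature records. A minimal sketch, with hypothetical field names, made-up sample data, and an assumed 50% tolerance before a deviation is flagged:

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class FeatureRun:
    feature_id: str
    token_cost_usd: float
    failed_checks_first_pass: int
    minutes_to_deploy: float


def summarize(runs: list) -> dict:
    """Aggregate per-feature records into the dashboard metrics."""
    return {
        "features": len(runs),
        "avg_cost_usd": round(mean([r.token_cost_usd for r in runs]), 2),
        "first_pass_success_rate": sum(r.failed_checks_first_pass == 0 for r in runs) / len(runs),
        "rework_rate": mean([r.failed_checks_first_pass for r in runs]),
        "avg_minutes_to_deploy": mean([r.minutes_to_deploy for r in runs]),
    }


def needs_investigation(current_avg: float, baseline_avg: float, tolerance: float = 0.5) -> bool:
    """Flag when the current average drifts more than `tolerance` (as a fraction) from baseline."""
    return abs(current_avg - baseline_avg) > tolerance * baseline_avg


week = [
    FeatureRun("F-201", 5.00, 0, 10.0),
    FeatureRun("F-202", 6.00, 3, 14.0),
    FeatureRun("F-203", 5.50, 0, 12.0),
    FeatureRun("F-204", 5.50, 1, 12.0),
]
summary = summarize(week)
cost_alarm = needs_investigation(12.30, baseline_avg=5.50)
```

A fixed percentage tolerance is the simplest possible rule. Control limits derived from the baseline's own variation would be the more rigorous choice once enough history exists.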

Level 5: Optimizing (Month 3)

- Escaped Defect Analyzer (#14) performs root cause analysis on every bug that reaches production

- Prompt Effectiveness Tracker (#15) identifies prompts that consistently cause rework

- Factory Self-Assessment Agent (#20) generates weekly health reports with improvement recommendations

- Changes are A/B tested: "New prompt vs. old prompt—which has higher first-pass rate?"
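For the prompt A/B test, one standard way to answer "which has the higher first-pass rate?" is a two-proportion z-test. This is a sketch under assumptions: the counts are invented, and the test presumes independent runs and samples large enough for the normal approximation to hold.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in success rates, B minus A."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Invented counts: first-pass successes out of 100 features per prompt.
z, p_value = two_proportion_z_test(success_a=60, n_a=100, success_b=78, n_b=100)
adopt_new_prompt = z > 0 and p_value < 0.05
```

The 0.05 threshold is a convention, not a rule. The useful habit is deciding the threshold and the sample size before the experiment runs, so the decision to adopt or reject isn't made by eyeballing a dashboard.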

The factory climbs from managed to optimizing in 3 months. Not because you worked harder. Because machines don't get tired of measuring.

The Strategic Advantage

Here's why this matters beyond just "better process":

Elite performers aren't elite because they work harder. They're elite because they improve faster.

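DORA's research points in this direction: its elite performers lead on throughput (deployment frequency, lead time for changes) and stability (change failure rate, time to restore service) at the same time. The best software organizations don't just ship more frequently or have fewer incidents. They have shorter improvement cycles.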

When they spot a problem, they:

- Measure it quantitatively

- Identify root cause systematically

- Test a solution with data

- Roll it out and verify improvement

This is continuous optimization. And it's been the exclusive domain of organizations rich enough to dedicate teams to process improvement.

Until now.

When your factory operates with quantitative management and continuous optimization, you don't just build software faster. You improve faster. Every week, the factory gets a little better at avoiding the mistakes it made last week.

Your competitors are stuck at managed or defined processes, making the same mistakes repeatedly, unable to measure whether they're actually improving.

You're compounding improvements. They're treading water.

The Uncomfortable Question

So here's where we are:

- You've built the checker pipeline (Post 3)

- You understand the maturity ladder (this post)

- You know that quantitative management and continuous optimization are where elite performers live

But what do you actually measure?

What are the metrics that separate elite from average? What numbers do you put on the dashboard? How do you know if $5.50 per feature is good or bad? How do you know if a rework rate of 2.3 failed checks per feature is high or low?

In the next post, we'll dive into the metrics that matter—the specific numbers that elite performers track, the baselines to compare against, and how to build a dashboard that actually tells you whether you're improving.

Because running quantitative management without knowing what to measure is like having a speedometer that only shows "fast" or "slow." You need the actual numbers.

And the good news? The frameworks exist. The benchmarks exist. The tooling exists. We just need to wire them into the factory.

Next in the series: Measuring What Matters: The Metrics You've Always Wanted — Deployment frequency, lead time, rework rate, and the numbers that separate elite performers from the rest.
